Motion Restoration: A Method for Object and Global Motion Estimation

Jih-Shi Su†, Hsueh-Ming Hang‡, and David W. Lin‡

†Dept. of Electronics Engineering and Center for Telecommunications Research, National Chiao Tung University

Hsinchu, Taiwan 30050, ROC

Bellcore

445 South Street

Morristown, NJ 07960-6438, USA

Abstract

A new technique called the motion restoration method (MRM) for estimating the global motion due to zoom and pan of the camera is proposed. It is composed of three steps: (a) block-matching motion estimation, (b) object assignment, and (c) global motion restoration. In this method, each image is first divided into a number of blocks. Step (a) may employ any suitable block-matching motion estimation algorithm to produce a set of motion vectors which capture the compound effect of zoom, pan, and object movement. Step (b) groups the blocks which share common global motion characteristics into one object. Step (c) then extracts the global motion parameters (zoom and pan) corresponding to each object from the compound motion vectors of its constituent blocks. The extraction of global motion parameters is accomplished via singular value decomposition (SVD). Experimental results show that this new technique is efficient in reducing the entropy of the block motion vectors for both zooming and panning motions and may also be used for image segmentation.

Keywords: motion restoration, object assignment, central projection, singular value decomposition

1 Introduction

Motion estimation plays an important role in video data compression, which exploits the high temporal redundancy between successive frames of a video sequence to achieve a high compression ratio. It is also used in segmentation of images for computer vision applications. The most common technique of motion estimation employed in video coding is block matching [1]–[3]. In this technique, a single motion vector is estimated for each image block by comparing the current-frame image block to the blocks in the previous frame that correspond to different displacement vectors. The displacement vector that minimizes a predetermined error criterion is chosen. The assumption underlying block-matching motion estimation is that all the pixels inside a block are undergoing the same translational motion. As a result, this approach may generate a significant proportion of motion vectors that do not correspond to true motion. This imprecise estimation will increase the prediction error and reduce the compression ratio. Therefore, methods that can cope with more general forms of motion (including translation, zoom, pan, and deformation) have been the focus of a great deal of research in recent years [4]–[7].
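As an illustration of the block-matching idea described above, the following is a minimal full-search sketch in Python with NumPy. The function name `full_search_bma`, the sum of absolute differences (SAD) as the error criterion, and the search range are assumptions made for this example; the paper only requires "a predetermined error criterion".

```python
import numpy as np

def full_search_bma(prev, curr, block=16, search=7):
    """Return one (dy, dx) vector per block of `curr`.

    Each vector points from a block in the current frame to its best
    match in the previous frame (SAD criterion, assumed here).
    """
    h, w = curr.shape
    rows, cols = h // block, w // block
    mv = np.zeros((rows, cols, 2), dtype=int)
    prev = prev.astype(np.int64)
    curr = curr.astype(np.int64)
    for r in range(rows):
        for c in range(cols):
            y0, x0 = r * block, c * block
            target = curr[y0:y0 + block, x0:x0 + block]
            best = None
            for dy in range(-search, search + 1):
                for dx in range(-search, search + 1):
                    y1, x1 = y0 + dy, x0 + dx
                    # Skip candidates that fall outside the previous frame.
                    if y1 < 0 or x1 < 0 or y1 + block > h or x1 + block > w:
                        continue
                    cand = prev[y1:y1 + block, x1:x1 + block]
                    sad = np.abs(target - cand).sum()
                    if best is None or sad < best:
                        best = sad
                        mv[r, c] = (dy, dx)
    return mv
```

Every pixel of a block receives the same vector, which is exactly the translational assumption the text identifies as the source of imprecise estimates under zoom.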

We propose a method called motion restoration to estimate local as well as global motion. This method consists of three steps: (a) block-matching motion estimation, (b) object assignment, and (c) global motion restoration. The first step estimates translational motion in a block-by-block fashion and it may employ any appropriate block-matching algorithm (BMA). The two remaining steps then extract the zooming and panning components from the block motion vectors obtained in the first step. The entropy in the motion vectors is thereby reduced. As a result, we can reduce the amount of data to be transmitted. Alternatively, we may use a smaller block size so that the amount of data is not reduced but the BMA could yield a more accurate estimate to start with, alleviating the inaccuracy problem associated with traditional block-based motion estimation.

This paper is organized as follows. In Section 2, we give a mathematical description for general global and object motion. In Section 3, the proposed motion restoration method (MRM) is derived. Section 4 is devoted to the presentation and discussion of experimental results. Section 5 is the conclusion.

2 Mathematical Description of Global Motion

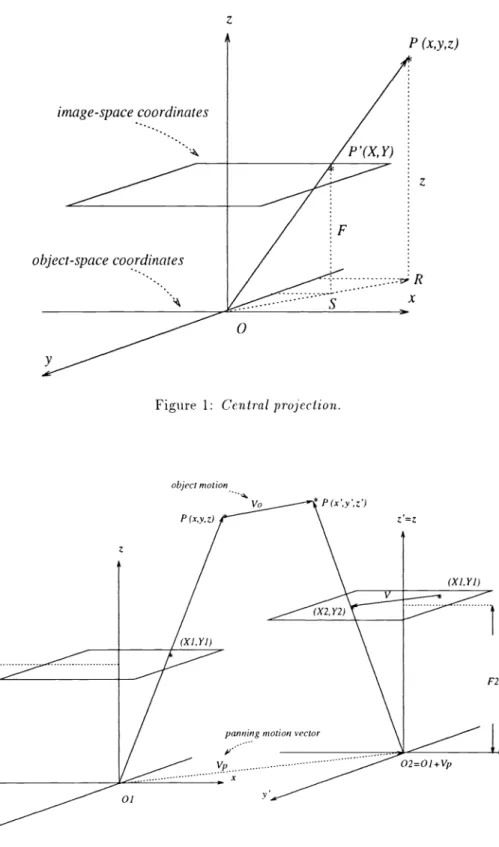

To match the mechanism of ordinary video cameras, we use central projection to model the motion traces on the recorded images caused l)y object or cairiera movement (i.e., zoom, pan, etc.). Figure 1 illustrates our model. P is a 1)OiIlt of interest on an object. Let

( x, y, z) = ol)ject-space coordinates of the point P,

( X, Y) =image—planecoordinates of the image point P', and

F =

z-coordiiiateof the image-plane in object-space.Based on similarity l)etweell the triangles OPR and LOP'S, we have

FOSY

1z OR y

H

Therefore,

Y F.

(2)Similarly, we also have

X=F.

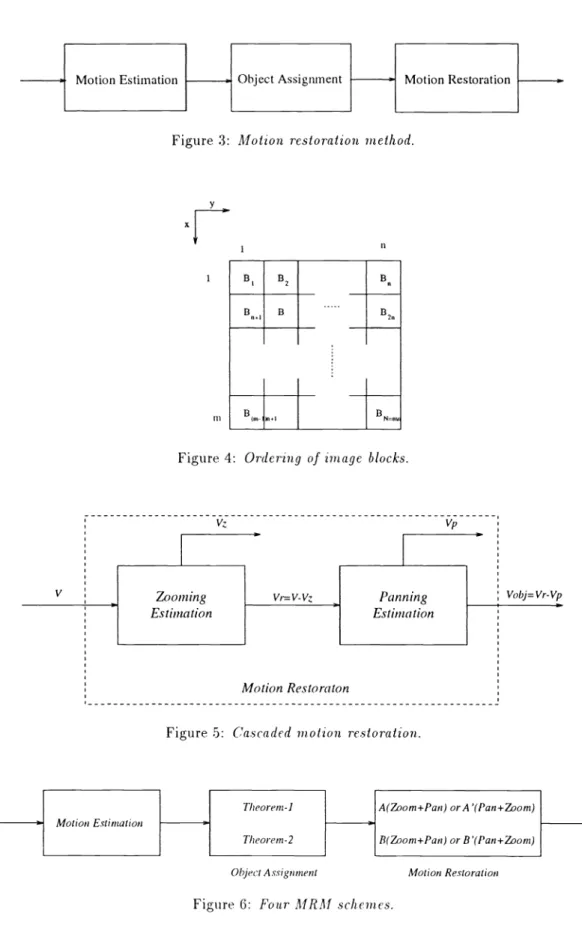

(3)A general moverrient consisting of zoom, pan, and object motion is (lel)icted in Figure 2. Let 1/ be the dis-placerrient vector of point P and let V0, V0,, and V0 be its x-directional, y-directional and z-directional components,

respectively. Geometry then gives

I

1

Y2y'(y+VoyVpy)

, (4)where (X2, Y2) is the projection of P(x', y', z') on the image plane and (V, V,) is the panning vector of camera

(or,equivalently, image coordinates). Now note that (from Equations (2) and (3))

x=—X1,

y=fYi.

(5)Inserting Equation (5) into Equation (4), we obtain

(

— F_)/2

) X2_Xi+Vor-V,x)

1 'y — _E.2_._( 2 1/ TI I.. 2 —':r;- i -r oy

YpyTherefore, the corresponding vector (Vi, V,) on the image plane due to the combination of object and camera

movement is

I

X2Xi(1)Xifr7p+frVo

(7)For simplicity, we rewrite Equation (7) as

I

v

= zx1 + P1/b + vor

(8) 1.Vy=ZYl+PV)y+VOy

where 7 — ILaF,z+V0

'=-*

ox — U — Voy — z+V0VoyThefirst term in the righthand side of Equation (7) is clue to camera zoom. The second term is caused by pan.

And the third term is the projection of the object's movement on the image plane. In the next section, we derive a method to restore the motion components, i.e., the pan, zoom, and object motion parameters.
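The decomposition into zoom, pan, and object terms can be checked numerically against the central projection. The sketch below assumes the reading $\mathcal{Z} = -V_{oz}/(z+V_{oz})$ and $\mathcal{P} = -F/(z+V_{oz})$, with the object motion scaled by $F/(z+V_{oz})$ and the pan vector subtracted from the object-space coordinates. The function names `project` and `image_motion` are hypothetical.

```python
def project(point, F):
    """Central projection of an object-space point onto the image plane."""
    x, y, z = point
    return F * x / z, F * y / z

def image_motion(X1, Y1, z, F, v_obj, v_pan):
    """Image-plane motion vector as the sum of zoom, pan, and object terms."""
    vox, voy, voz = v_obj
    vpx, vpy = v_pan
    zoom = -voz / (z + voz)   # coefficient of the block position (zoom term)
    pan = -F / (z + voz)      # coefficient of the panning vector
    scale = F / (z + voz)     # projects object motion onto the image plane
    vx = zoom * X1 + pan * vpx + scale * vox
    vy = zoom * Y1 + pan * vpy + scale * voy
    return vx, vy

# Compare with a direct projection before and after the movement.
F = 1.0
x, y, z = 2.0, -1.0, 10.0
v_obj, v_pan = (0.3, 0.2, -2.0), (0.5, -0.4)
X1, Y1 = project((x, y, z), F)
moved = (x + v_obj[0] - v_pan[0], y + v_obj[1] - v_pan[1], z + v_obj[2])
X2, Y2 = project(moved, F)
vx, vy = image_motion(X1, Y1, z, F, v_obj, v_pan)
print(abs((X2 - X1) - vx) < 1e-12, abs((Y2 - Y1) - vy) < 1e-12)
```

The zoom term is the only one that depends on the image position, which is what the object-assignment test in the next section exploits.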

3 Motion Restoration Method (MRM)

The architecture of the motion restoration method is shown in Figure 3. It consists of three steps:

1. Motion Estimation:

In this step, the motion vector of each block of an image is obtained using a suitable BMA (e.g., full search, 3-step search, or others). In our simulation, we use the full-search BMA to estimate these motion vectors. The resultant motion vector field forms the basis of the following two steps.

2. Object Assignment:

We assign the blocks that share certain common global motion characteristics to the same object. The assignment criteria are summarized in two object-assignment theorems to be described later.

3. Motion Restoration:

The motion components due to camera zooming and panning are extracted in this step. Hence, the object movement is separated from the camera motion.

3.1 Object Assignment

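For simplicity, images are divided into blocks and each block is viewed as a single computational unit. Thus, let $(X_1, Y_1)$ in Equation (8) refer to the center of a block. Let A and B be two image blocks. According to Equation (8), we have

$$\begin{cases} V_{xA} = \mathcal{Z}_A X_A + \mathcal{P}_A V_{px} + V_{oxA} \\ V_{yA} = \mathcal{Z}_A Y_A + \mathcal{P}_A V_{py} + V_{oyA} \end{cases} \tag{9}$$

and

$$\begin{cases} V_{xB} = \mathcal{Z}_B X_B + \mathcal{P}_B V_{px} + V_{oxB} \\ V_{yB} = \mathcal{Z}_B Y_B + \mathcal{P}_B V_{py} + V_{oyB} \end{cases} \tag{10}$$

If these two blocks belong to the same object, then the corresponding motion parameters $(\mathcal{Z}, \mathcal{P}, V_o)$ will be equal. Assuming this is true, we subtract Equation (10) from Equation (9) and obtain

$$\frac{V_{xA} - V_{xB}}{V_{yA} - V_{yB}} = \frac{X_A - X_B}{Y_A - Y_B}. \tag{11}$$

Therefore, we have the following object-assignment theorem.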

Theorem 1 If zoom motion exists ($\mathcal{Z}$ is nonzero) and two blocks 1 and 2 belong to the same object, then

$$\frac{V_{x1} - V_{x2}}{V_{y1} - V_{y2}} = \frac{X_1 - X_2}{Y_1 - Y_2},$$

where

$(X_i, Y_i)$ = the central coordinates of block $i$,

$(V_{xi}, V_{yi})$ = the observed motion vector in the image plane.

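Theorem 1 is valid under rather general conditions; that is, when both zoom and pan exist. If only panning exists, then Equations (9) and (10) become

$$\begin{cases} V_{xA} = \mathcal{P}_A V_{px} + V_{oxA} \\ V_{yA} = \mathcal{P}_A V_{py} + V_{oyA} \end{cases} \tag{12}$$

and

$$\begin{cases} V_{xB} = \mathcal{P}_B V_{px} + V_{oxB} \\ V_{yB} = \mathcal{P}_B V_{py} + V_{oyB} \end{cases} \tag{13}$$

If the two blocks belong to the same object, then

$$\begin{cases} V_{xA} = V_{xB} \\ V_{yA} = V_{yB} \end{cases} \tag{14}$$

Thus we obtain the second object-assignment theorem.

Theorem 2 If zoom motion does not exist and the two blocks A and B belong to the same object, then

$$\begin{cases} V_{xA} = V_{xB} \\ V_{yA} = V_{yB} \end{cases}$$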
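The two object-assignment tests can be written as small predicates. The sketch below is one possible reading, not the authors' implementation: the names `same_object_zoom` and `same_object_pan`, the tuple layout `(X, Y, Vx, Vy)`, and the tolerance `tol` are all assumptions. The Theorem 1 test is cross-multiplied to avoid division by zero, which also accepts the degenerate case where both motion differences vanish.

```python
def same_object_zoom(block_a, block_b, tol=1e-6):
    """Theorem 1 test in cross-multiplied form (tolerance is an assumption)."""
    xa, ya, vxa, vya = block_a
    xb, yb, vxb, vyb = block_b
    lhs = (vxa - vxb) * (ya - yb)
    rhs = (vya - vyb) * (xa - xb)
    return abs(lhs - rhs) <= tol

def same_object_pan(block_a, block_b, tol=1e-6):
    """Theorem 2 test: both motion vector components agree."""
    _, _, vxa, vya = block_a
    _, _, vxb, vyb = block_b
    return abs(vxa - vxb) <= tol and abs(vya - vyb) <= tol

# Two blocks sharing a zoom factor of 0.1 and a common offset (2, -1),
# plus a third block that moves differently.
a = (8.0, 8.0, 0.1 * 8.0 + 2.0, 0.1 * 8.0 - 1.0)
b = (24.0, 40.0, 0.1 * 24.0 + 2.0, 0.1 * 40.0 - 1.0)
c = (40.0, 8.0, 5.0, 5.0)
print(same_object_zoom(a, b), same_object_zoom(a, c))
```

With measured, integer-valued block motion vectors, exact equality rarely holds, so a practical tolerance would likely need to be much looser than the default used here.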

Based on the above object-assignment theorems, the blocks in the whole image can be grouped into a number of objects. The blocks that belong to the same object have the same global and object motion vectors. Therefore, for an object containing $p$ blocks, we have

$$\begin{cases} V_{x1} = \mathcal{Z} X_1 + \mathcal{P} V_{px} + V_{ox} \\ V_{y1} = \mathcal{Z} Y_1 + \mathcal{P} V_{py} + V_{oy} \\ \quad\vdots \\ V_{xp} = \mathcal{Z} X_p + \mathcal{P} V_{px} + V_{ox} \\ V_{yp} = \mathcal{Z} Y_p + \mathcal{P} V_{py} + V_{oy} \end{cases} \tag{15}$$

where $\mathcal{Z}$ and $\mathcal{P}$ are identical for all the $p$ blocks. This set of linear equations can be abbreviated as $AW = b$.

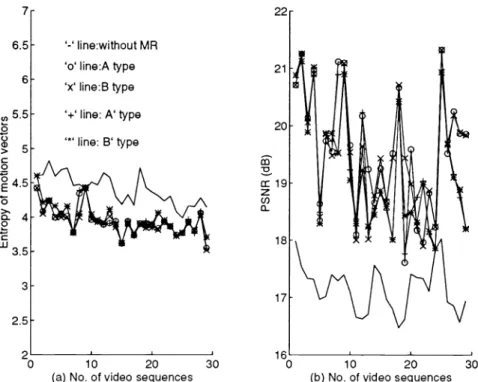

In object assignment, we first index each block in an image in ascending numerical order as shown in Figure 4. The blocks are denoted $B_i$, $i = 1, \ldots, N$. We then invoke the following procedure.

Step 0: Set j = 1. Let all blocks be unmarked.

Step 1: Among all unmarked blocks, choose the one with the smallest index as the reference block and denote it $B_{ref}$. Mark this block and assign it to object j.

Step 2: For each remaining unmarked block, test it against $B_{ref}$ for Equality (11) or (14). If equality holds, then mark it and assign it to object j.

Step 3: If all blocks are marked, then stop. Otherwise let j = j + 1 and go to Step 1.
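Steps 0 to 3 above can be sketched as a short greedy loop. The function name `assign_objects` and the idea of passing the equality test as a callable `same_object` are assumptions for illustration; any test implementing Equality (11) or (14) could be supplied.

```python
def assign_objects(blocks, same_object):
    """Greedy object assignment following Steps 0 to 3.

    `blocks` is a list in index order; `same_object(ref, other)` returns
    True when the chosen equality test holds. Returns one label per block.
    """
    labels = [0] * len(blocks)          # Step 0: 0 means "unmarked"
    j = 0
    for ref in range(len(blocks)):
        if labels[ref]:
            continue                    # already assigned to an earlier object
        j += 1                          # Step 1: smallest unmarked index is the reference
        labels[ref] = j
        for k in range(ref + 1, len(blocks)):
            # Step 2: test each remaining unmarked block against the reference.
            if not labels[k] and same_object(blocks[ref], blocks[k]):
                labels[k] = j
    return labels                       # Step 3: loop ends when all blocks are marked

# Usage with a pan-only test on (Vx, Vy) pairs.
vectors = [(1, 0), (1, 0), (3, 2), (1, 0), (3, 2)]
print(assign_objects(vectors, lambda a, b: a == b))
```

Because every block is compared only with the reference block of its object, the outcome depends on the block ordering, and each block is tested at most once per object.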

In the next subsection, we discuss how motion restoration is performed on the "objectized" image to compute the global motion parameters for each object.

3.2 Cascaded Motion Restoration

One way to implement the motion restoration block shown in Figure 3 is to decompose it into two cascaded sub-steps for separate zoom and pan estimation as depicted in Figure 5. This figure shows that the motion vectors of an arbitrary object are first processed for zooming estimation, which extracts the zoom vector $V_z$ from the motion vector $V$. The difference vector $V_r = V - V_z$ is then processed for panning estimation and is separated into a pan vector $V_p$ and an object motion vector $V_{ob} = V_r - V_p$. The two estimation sub-steps may be reversed to yield a pan-plus-zoom (P+Z) architecture instead of the depicted zoom-plus-pan (Z+P) architecture. The overall organization of the complete motion restoration process can therefore have a number of variants. The four that we considered are denoted as schemes A, A', B, and B', respectively, in Figure 6. Schemes A and A' employ Theorem 1 in object assignment, while schemes B and B' employ Theorem 2.

We next describe in more detail how each sub-step in the cascaded motion restoration can be performed, assuming a Z+P architecture. Equations for the P+Z architecture can be similarly derived.

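a. Zooming estimation

Assuming $\mathcal{P} V_p + V_o = V_r$, we can rewrite Equation (15) as

$$\begin{cases} V_{x1} = \mathcal{Z} X_1 + V_{rx} \\ V_{y1} = \mathcal{Z} Y_1 + V_{ry} \\ \quad\vdots \\ V_{xp} = \mathcal{Z} X_p + V_{rx} \\ V_{yp} = \mathcal{Z} Y_p + V_{ry} \end{cases} \tag{16}$$

The above equation can be expressed in matrix notation as

$$A_z W_z = b_z,$$

where

$$A_z = \begin{bmatrix} X_1 & 1 & 0 \\ \vdots & \vdots & \vdots \\ X_p & 1 & 0 \\ Y_1 & 0 & 1 \\ \vdots & \vdots & \vdots \\ Y_p & 0 & 1 \end{bmatrix}, \qquad W_z = [\mathcal{Z}, V_{rx}, V_{ry}]^T, \qquad b_z = [V_{x1}, \ldots, V_{xp}, V_{y1}, \ldots, V_{yp}]^T.$$

Using the singular value decomposition (SVD) technique [8], we can obtain the solution as

$$W_z = A_z^{+} b_z.$$

After removing the zooming factor $\mathcal{Z}$, $V_r = (V_{rx}, V_{ry})$ is passed to the next sub-step.

b. Panning estimation

From the result of zooming estimation, we remove the $\mathcal{Z}$ component in Equation (15) and obtain

$$\begin{cases} V_{rx1} = \mathcal{P} V_{px} + V_{ox} \\ V_{ry1} = \mathcal{P} V_{py} + V_{oy} \\ \quad\vdots \\ V_{rxp} = \mathcal{P} V_{px} + V_{ox} \\ V_{ryp} = \mathcal{P} V_{py} + V_{oy} \end{cases} \tag{17}$$

This can be rewritten as

$$A_p W_p = b_p,$$

where

$$A_p = \begin{bmatrix} 1 & 1 & 0 & 0 \\ \vdots & \vdots & \vdots & \vdots \\ 1 & 1 & 0 & 0 \\ 0 & 0 & 1 & 1 \\ \vdots & \vdots & \vdots & \vdots \\ 0 & 0 & 1 & 1 \end{bmatrix}, \qquad W_p = [\mathcal{P} V_{px}, V_{ox}, \mathcal{P} V_{py}, V_{oy}]^T, \qquad b_p = [V_{rx1}, \ldots, V_{rxp}, V_{ry1}, \ldots, V_{ryp}]^T.$$

Applying SVD again, we can obtain the panning vector from

$$W_p = A_p^{+} b_p.$$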
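The two cascaded least-squares problems can be sketched with NumPy, whose `np.linalg.pinv` computes the pseudo-inverse through an SVD. The function names `estimate_zoom` and `estimate_pan`, the row ordering (all x equations first, then all y equations), and the unknown ordering in the pan step are assumptions of this sketch rather than details confirmed by the paper.

```python
import numpy as np

def estimate_zoom(centers, vectors):
    """Solve the zoom system for [Z, Vrx, Vry] with the pseudo-inverse."""
    X, Y = centers[:, 0], centers[:, 1]
    ones, zeros = np.ones(len(X)), np.zeros(len(X))
    A = np.vstack([np.column_stack([X, ones, zeros]),
                   np.column_stack([Y, zeros, ones])])
    b = np.concatenate([vectors[:, 0], vectors[:, 1]])
    Z, vrx, vry = np.linalg.pinv(A) @ b
    return Z, np.array([vrx, vry])

def estimate_pan(residuals):
    """Solve the pan system for [P*Vpx, Vox, P*Vpy, Voy] with the pseudo-inverse."""
    ones, zeros = np.ones(len(residuals)), np.zeros(len(residuals))
    A = np.vstack([np.column_stack([ones, ones, zeros, zeros]),
                   np.column_stack([zeros, zeros, ones, ones])])
    b = np.concatenate([residuals[:, 0], residuals[:, 1]])
    ppx, vox, ppy, voy = np.linalg.pinv(A) @ b
    return np.array([ppx, ppy]), np.array([vox, voy])

# One object of four blocks: zoom factor 0.05 plus a common offset (2, -1).
centers = np.array([[8.0, 8.0], [24.0, 8.0], [8.0, 24.0], [24.0, 24.0]])
vectors = 0.05 * centers + np.array([2.0, -1.0])
Z, v_r = estimate_zoom(centers, vectors)
pan, obj = estimate_pan(vectors - Z * centers)
print(Z, v_r, pan, obj)
```

Since the pan and object columns of the second matrix are identical, that system is rank deficient, and the pseudo-inverse returns the minimum-norm solution, which splits the residual evenly between the pan and object terms.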

4 Experimental Results

The proposed algorithm is tested on a variety of image sequences. We present the results from using the flower garden and the table tennis sequences. Each sequence contains 30 pictures at a resolution of 720x480 per picture. The flower garden sequence contains panning activity only, whereas the table tennis sequence has individual object movement as well. Besides the four MRM schemes outlined previously, we also consider a zero-forcing (ZF) MRM in which the object motion vectors are set to zero. This approach is based on the assumption that object motion vectors do not significantly affect the estimated zoom and pan vectors and hence can be neglected in their estimation.

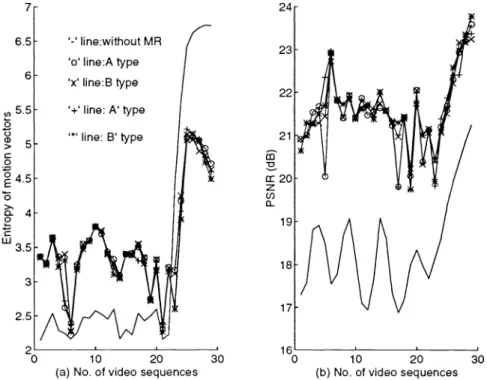

The numerical results are summarized in Figures 7–10, in which we compare the entropy of the block motion vectors as well as the PSNR before and after motion restoration. The entropy values are computed frame-by-frame using the statistics of each frame separately. In addition, since the block motion vectors after the extraction of global motion components may not be integers, they are quantized prior to entropy computation. The block size is 16 x 16 in all experiments. The figures show that the MRM can reduce the entropy of the block motion vectors and increase the PSNR. Interestingly, the ZF MRM is found to significantly outperform other MRM schemes in both entropy reduction and PSNR gain in some cases.
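A per-frame entropy measurement of this kind might be sketched as follows. The function name `motion_vector_entropy`, the uniform quantizer with step `step`, and the choice to treat each (Vx, Vy) pair as one symbol are assumptions; the paper does not state the quantizer or whether the components are coded jointly.

```python
import numpy as np
from collections import Counter

def motion_vector_entropy(vectors, step=1.0):
    """Empirical entropy (bits per vector) of one frame's motion vectors.

    Vectors are rounded to multiples of `step` before counting, and each
    quantized (Vx, Vy) pair is treated as a single symbol.
    """
    q = np.rint(np.asarray(vectors, dtype=float).reshape(-1, 2) / step).astype(int)
    counts = Counter(map(tuple, q))
    probs = np.array(list(counts.values()), dtype=float) / len(q)
    return float(-(probs * np.log2(probs)).sum())

# Two equally likely vectors give 1 bit; a constant field gives 0 bits.
print(motion_vector_entropy([[1, 0], [1, 0], [0, 1], [0, 1]]))
print(motion_vector_entropy([[0.9, 0.1], [1.1, -0.1]]))
```

After global motion is removed, the residual vectors of a well-modeled object cluster around a single value, which is why the measured entropy drops.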

For the flower garden sequence, Figure 8 shows that schemes A' and B' yield a higher compression ratio than schemes A and B. This is intuitively reasonable since the sequence contains pan motion only, and schemes A' and B' conduct panning estimation first while schemes A and B do zooming estimation first. As a result, schemes A and B may produce incorrect zooming vectors and thereby result in a higher distortion in the subsequent panning estimation. In the case of the table tennis sequence, there is no significant global motion before the 23rd frame, at which camera zoom commences. This causes the MRM to produce an increase in the entropy of the motion vectors for the first 22 frames. This undesirable anomaly of the MRM can be avoided by developing an improved method or by turning off the MRM in adverse conditions.

From coding experiments on different video material with a CCITT H.261-type coder, we note that the

amount of motion information can vary from 10% to over 20% of the total compressed video data. Therefore,

depending on the video material, the bit-rate saving from the above entropy reduction can be quite significant.

5 Conclusion

We gave a mathematical model describing global motions in an image sequence. Based on this model, the motion restoration method (MRM) was derived, which can restore the zoom, pan, and object motion vectors in an image. Four variants were considered, plus one which forces the object motion vectors to zero (the zero-forcing MRM). Simulation results show that, for images containing both panning and zooming, the proposed method can achieve roughly 30% to 40% of entropy reduction in the block motion vectors. The zero-forcing MRM can also be quite advantageous compared to the four more elaborate alternatives.

Due to the object-assignment step, the method is inherently hierarchical. The proposed object-assignment technique can also be used for image segmentation in various applications such as computer vision and pattern recognition.

References

[1] H. G. Musmann, P. Pirsch, and H. Grallert, "Advances in picture coding," Proc. IEEE, vol. 73, no. 4, pp. 523–548, Apr. 1985.

[2] A. Zaccarin and B. Liu, "Fast algorithm for block motion estimation," Proc. IEEE ICASSP'92, pp. III-449–III-452.

[3] S. Iu, "Comparison of motion compensation using different degrees of sub-pixel accuracy for interfield/interframe hybrid coding of HDTV image sequences," Proc. IEEE ICASSP'91, pp. III-465–III-468.

[4] V. Seferidis, "Three dimensional block matching motion estimation," Electron. Lett., vol. 28, pp. 1770–1772, August 1992.

[5] J. Konrad and E. Dubois, "Estimation of image motion field: Bayesian formulation and stochastic solution," in Proc. IEEE ICASSP'88, pp. 1072–1075, Apr. 1988.

[6] S. F. Wu and J. Kittler, "A differential method for simultaneous estimation of rotation, change of scale and translation," Signal Process.: Image Commun., vol. 2, pp. 69–80, 1990.

[7] M. Hoetter, "Differential estimation of the global motion parameters zoom and pan," Signal Process., vol. 16, pp. 249–265, 1989.

[8] G. H. Golub and C. F. Van Loan, Matrix Computations. The Johns Hopkins University Press, 1989.

1878 / SPIE Vol. 2308

Figure 1: Central projection.

Figure 2: [caption not recovered; diagram labels: object motion, panning motion vector, O2 = O1 + Vp]

Figure 3: Motion restoration method.

Figure 4: Ordering of image blocks.

Figure 5: Cascaded motion restoration.

Figure 6: Four MRM schemes. Motion estimation is followed by object assignment and motion restoration: Theorem 1 with scheme A (Zoom+Pan) or A' (Pan+Zoom), and Theorem 2 with scheme B (Zoom+Pan) or B' (Pan+Zoom).

Figure 7: Entropy reduction and PSNR gain for the flower garden sequence with motion restoration. Panels (a) and (b); horizontal axes: No. of video sequences. Legend: '-' without MR, 'o' scheme A, 'x' scheme B, '+' scheme A', '*' scheme B'.

Figure 9: Entropy reduction and PSNR gain for the table tennis sequence with motion restoration. Panels (a) and (b); horizontal axes: No. of video sequences. Legend as in Figure 7.