2006 IEEE International Conference on Systems, Man, and Cybernetics

October 8-11, 2006, Taipei, Taiwan

Computer Music Composition Based on Discovered Music Patterns

Shih-Chuan Chiu and Man-Kwan Shan

Abstract-Computer music composition has long been the dream of computer music researchers. In this paper, we investigate an approach that discovers the rules of music composition from given music objects and automatically generates a new music object whose style is similar to that of the given music objects. The proposed approach utilizes data mining techniques to discover the rules of music composition characterized by music properties, music structure, melody style, and motif. A new music object is generated based on the discovered rules. To measure the effectiveness of the proposed approach, we adopted a method similar to the Turing test to test whether subjects can discriminate between machine-generated and human-composed music. Experimental results showed that the two are hard to discriminate. Another experiment showed that the style of the generated music is similar to that of the given music objects.

I. INTRODUCTION

COMPOSING music by formal, machine-based processes has been investigated for a long time. Current research on computer composition may be classified into two approaches according to the way the composition rules are generated. In the explicit approach, the composition rules are specified by humans, while in the implicit approach the composition rules are learned from sample music. The implicit approach therefore requires training data from which to discover the composition rules. In this paper, we investigate the implicit approach to computer music composition based on music patterns discovered from training data. The developed approach takes a set of user-specified music as input and generates music whose style is similar to that of the user-specified set.

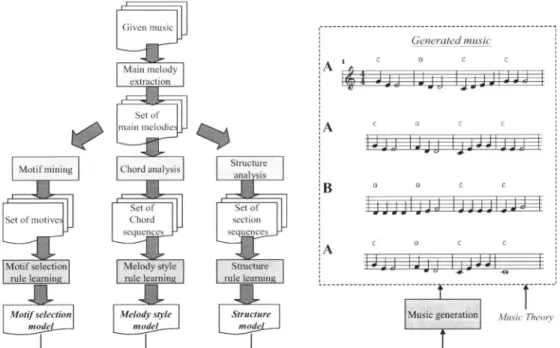

There are four design issues regarding the implicit approach: feature extraction, feature analysis, rule learning, and music generation, as shown in Figure 1. Feature extraction concerns the extraction of low-level music features from sample music. Feature analysis obtains high-level semantic information from the low-level music features. Rule learning discovers the patterns (compositional rules), in terms of the high-level semantic information, from the set of sample music. Music generation employs the discovered patterns to generate music.

The process of popular music production begins with two major steps, composition and arrangement, and ends with recording. Composers create the original melody with chords in the basic structure. Arrangers rewrite and adapt the original melody written by composers by specifying harmonies, instrumentation, style, dynamics, sequence, etc. After these two steps, performance, recording, mixing, and audio mastering are conducted to produce the music.

Shih-Chuan Chiu is with the Department of Computer Science, National Chiao Tung University, Hsinchu, Taiwan 300, ROC (e-mail: g9222@cs.nccu.edu.tw).

Man-Kwan Shan is with the Department of Computer Science, National Chengchi University, Taipei City 11605, Taiwan ROC (e-mail: mkshan@cs.nccu.edu.tw).

Existing work on the implicit approach generates the melody only, without considering chords. In this paper, we propose a new framework that addresses music composition with consideration of both melody and chord. In particular, the proposed framework is built on data mining techniques.

In the next section, we review previous work on computer music composition. Section 3 gives the system architecture and the feature extraction of the proposed approach. Feature analysis and rule learning are described in section 4. Section 5 presents the music generation method. Experiments are shown in section 6. Conclusions are given in section 7.

Fig. 1. Flowchart of computer music composition.

II. RELATED WORK

Early work on computer music generation focuses on the explicit approach, in which the composition rules are specified by the composer. Examples are the three approaches introduced in the classic computer music book "Composing Music with Computers," where the probability model, the grammar model, and the automata model are employed to model the music composition rules elicited from musicians [9].

Recent work on computer music composition tries to develop the implicit approach. D. Cope separated a set of music into small segments; a new music object is generated by analyzing and combining these small segments [2]. Y. Marom used Markov chains to model melody [8]. At the IRCAM research center, S. Dubnov et al. constructed a model for simulating the performance style of great masters by utilizing the prediction suffix tree (PST), which comes from the statistics and information theory areas [3]. At CMU, B. Thom proposed a real-time interactive system that generates a response solo according to the user's solo and a play style model. The system models play style by using an expectation-maximization algorithm trained on a large number of bars [12]. More recently, M. M. Farbood at MIT proposed a composition assistant system that generates music through the concept of painting [4].

Fig. 2. The system architecture and process flow of the proposed approach.

III. SYSTEM ARCHITECTURE AND MAIN MELODY EXTRACTION

Figure 2 shows the system architecture and the process flow of our proposed music system. Given a set of music in MIDI format, the main melody extraction component extracts the main melody and associated features for each music object. Then, each extracted melody is analyzed by the motif mining component, the chord analysis component, and the structure analysis component, respectively. The motif mining component finds the set of motifs that constitute the candidates for the motif selection learning component. The chord analysis component produces the set of chord sequences for melody style learning. The structure analysis component generates the set of section sequences for structure learning. After these analysis and learning processes, three models are established: the music structure model, the melody style model, and the motif selection model. In the music generation component, a new music object is generated based on these three models.

Melody is the essential element of music composition. The main melody extraction component consists of two steps. In the first step, quantization corrects the onset time and duration of notes, because in some MIDI files notes do not fall at the appropriate positions. The next step extracts the monophonic melody from the polyphonic music. Uitdenbogerd et al. [13] presented four melody extraction methods, namely All-mono, Entropy-channel, Entropy-part, and Top-channel. According to their experimental results, All-mono obtains the best accuracy. The basic idea of All-mono is to merge all tracks contained in a MIDI file. The main melody is then extracted by keeping the note with the highest pitch among the notes occurring at the same time.
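The two extraction steps can be illustrated with a minimal Python sketch. It is only an illustration under stated assumptions: the `Note` record, the quarter-beat quantization grid, and the function names are hypothetical, and "occurring at the same time" is simplified to "sharing the same onset after quantization."

```python
from collections import namedtuple

# Hypothetical note record; onset and duration are measured in beats.
Note = namedtuple("Note", ["onset", "duration", "pitch"])

def quantize(notes, grid=0.25):
    """Snap onsets and durations to the nearest multiple of `grid` beats."""
    out = []
    for n in notes:
        onset = round(n.onset / grid) * grid
        duration = max(grid, round(n.duration / grid) * grid)
        out.append(Note(onset, duration, n.pitch))
    return out

def all_mono(tracks):
    """Merge all tracks and keep the highest-pitched note at each onset."""
    highest = {}
    for track in tracks:
        for n in track:
            best = highest.get(n.onset)
            if best is None or n.pitch > best.pitch:
                highest[n.onset] = n
    return [highest[t] for t in sorted(highest)]

# Two small tracks: a slightly mistimed upper line and a second line.
tracks = [
    [Note(0.02, 0.98, 60), Note(1.0, 1.0, 62)],
    [Note(0.0, 1.0, 48), Note(1.0, 1.0, 67)],
]
melody = all_mono([quantize(t) for t in tracks])
```

A fuller implementation would also have to handle notes that overlap without sharing an onset, which this sketch ignores.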

IV. ANALYSIS AND LEARNING

4.1 Music Structure Analysis and Rule Learning

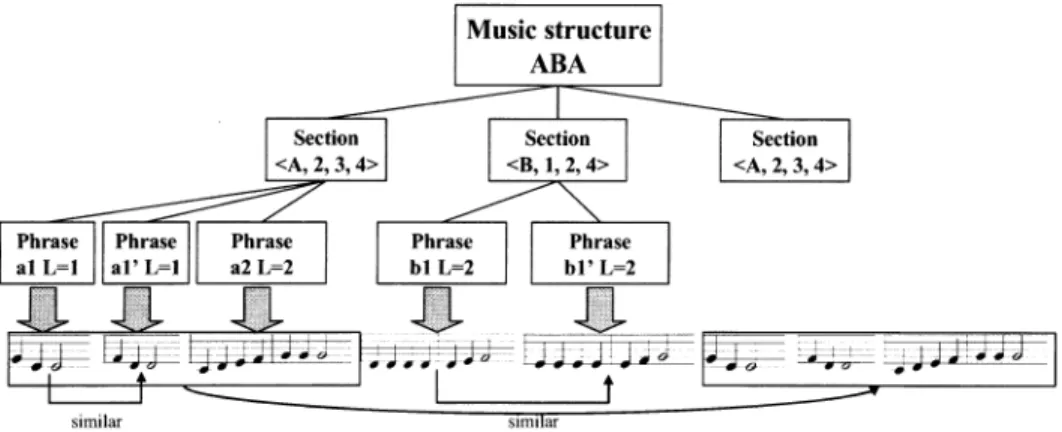

Music structure can be regarded as a hierarchical structure similar to the structure of an article. In our approach, a music object is composed of sections, and a section is composed of one or more phrases. The structure analysis component discovers the section-phrase hierarchical structure of a music object, while the structure learning component mines common characteristics from the structures of several music objects.

There are five steps in the music structure analysis. In the first step, the pitch and duration information of each note is extracted from the main melody. The main melody is a note sequence in which a note can be parameterized with several property values such as pitch, duration, and velocity. Velocity matters only for music performance; therefore, only pitch and duration are considered in the structure analysis.

Then, the repeating pattern technique is employed to discover the repeating patterns of the pitch-duration sequence. Algorithms based on the suffix tree and on the correlative matrix [6] exist for discovering repeating patterns.

Fig. 4. Flowchart of melody style mining. Given n music objects, (1) melody feature extraction produces the chord sequence of each music object, e.g. <C, G, G, C> and <C, Am, Dm, G>; (2) melody feature representation stores each sequence in a chord set table, e.g. {C, G}, a bi-gram set table, e.g. {(C, G), (G, G), (G, C)}, and a string table, e.g. <C, G, G, C>; (3) melody style mining yields the melody style model, e.g. {<C, G, C>, {C, G}, {(G, C), (C, Am)}, ...}.

After the repeating pattern mining process, a music object may contain more than one repeating pattern, and each repeating pattern appears as several instances. Figure 3 shows an example of the instances after repeating pattern mining on the music "Little Bee." In this figure, a strip denotes an instance of a non-trivial repeating pattern, abbreviated NTRP. There are six non-trivial repeating patterns.

Fig. 3. An example of the instances after repeating pattern mining on the music "Little Bee."

Not all instances of the repeating patterns are required for analysis; therefore, we have to select appropriate instances. In our approach, all instances of repeating patterns shorter than two bars are first filtered out. Then we transform the selection problem into the exon chaining problem in bioinformatics [7]. We wish to find the set of non-overlapping repeating pattern instances such that the total length of the selected instances is maximized. Given a set of weighted intervals in a chain, the exon chaining problem tries to find a set of non-overlapping intervals of maximum total weight. This problem can be solved by dynamic programming. We adapt this algorithm to the pattern selection problem by replacing the weight of an interval with its duration. Figure 3 shows the discovered repeating patterns and the corresponding instances of the music "Little Bee." The five circled instances are those selected by the selection algorithm modified from the exon chaining algorithm.
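The instance selection step can be sketched as a weighted interval scheduling dynamic program in which the weight of each interval is its own length. This is a minimal illustration, not the authors' implementation: the `(start, end)` tuple representation with an exclusive end and the function name `select_instances` are assumptions.

```python
from bisect import bisect_right

def select_instances(instances):
    """Pick non-overlapping (start, end) instances of maximum total length."""
    items = sorted(instances, key=lambda iv: iv[1])  # order by end position
    ends = [iv[1] for iv in items]
    best = [0] * (len(items) + 1)   # best[k]: optimum over the first k items
    take = [False] * len(items)     # whether item k is used in best[k + 1]
    prev = [0] * len(items)         # number of items ending before item k starts
    for k, (start, end) in enumerate(items):
        # Count earlier items whose end is at or before this start.
        p = bisect_right(ends, start, 0, k)
        prev[k] = p
        with_k = best[p] + (end - start)
        if with_k > best[k]:
            best[k + 1] = with_k
            take[k] = True
        else:
            best[k + 1] = best[k]
    # Walk back through the table to recover the chosen instances.
    chosen = []
    k = len(items)
    while k > 0:
        if take[k - 1]:
            chosen.append(items[k - 1])
            k = prev[k - 1]
        else:
            k -= 1
    return chosen[::-1]

chosen = select_instances([(0, 4), (3, 8), (8, 12), (2, 6), (6, 8)])
```

Sorting by end position and looking up the last compatible interval with `bisect_right` keeps the whole procedure at O(n log n) for n candidate instances.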

Each of the selected instances therefore corresponds to a section. We label the selected instances such that instances of the same repeating pattern receive the same symbol. For the example in Figure 3, the labeled sequence becomes ABCCB.

The next step of music structure analysis is to discover the phrase structure of each section. We use the LBDM (Local Boundary Detection Model) approach developed by Cambouropoulos [1] to segment a section into phrases. LBDM extracts the pitch interval sequence, the inter-onset interval sequence, and the rest sequence from the main melody. These three sequences are integrated into a sequence of boundary strength values measured by the change rule and the proximity rule. The peaks of the boundary strength sequence are taken as the boundaries of the segments.
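A minimal sketch of an LBDM-style boundary profile follows. It assumes the commonly described formulation in which the strength of each interval is its size multiplied by the sum of its degrees of change with respect to its two neighbours, and it combines the pitch, inter-onset, and rest profiles with weights 0.25, 0.5, and 0.25. These weights, the peak threshold, and all function names are assumptions for illustration, not details given in this paper.

```python
def change(a, b):
    """Degree of change between two non-negative interval values."""
    return abs(a - b) / (a + b) if (a + b) > 0 else 0.0

def strength(profile):
    """Normalized boundary strength of each interval in one profile."""
    s = []
    for i, x in enumerate(profile):
        left = change(profile[i - 1], x) if i > 0 else 0.0
        right = change(x, profile[i + 1]) if i + 1 < len(profile) else 0.0
        s.append(x * (left + right))  # proximity (size) times change
    peak = max(s, default=0.0)
    return [v / peak if peak > 0 else 0.0 for v in s]

def lbdm(pitches, onsets, offsets, weights=(0.25, 0.5, 0.25)):
    """Weighted boundary strength for each interval between adjacent notes."""
    pitch_iv = [abs(b - a) for a, b in zip(pitches, pitches[1:])]
    ioi = [b - a for a, b in zip(onsets, onsets[1:])]
    rest = [max(0.0, on - off) for off, on in zip(offsets, onsets[1:])]
    profiles = [strength(p) for p in (pitch_iv, ioi, rest)]
    return [sum(w * p[i] for w, p in zip(weights, profiles))
            for i in range(len(ioi))]

def peaks(strengths, threshold=0.5):
    """Indices of local maxima at or above the (assumed) threshold."""
    return [i for i, v in enumerate(strengths)
            if v >= threshold
            and (i == 0 or v > strengths[i - 1])
            and (i + 1 == len(strengths) or v > strengths[i + 1])]

# Two four-note groups separated by a leap and a two-beat rest.
pitches = [60, 62, 64, 65, 72, 74, 76, 77]
onsets = [0, 1, 2, 3, 6, 7, 8, 9]
offsets = [1, 2, 3, 4, 7, 8, 9, 10]
profile = lbdm(pitches, onsets, offsets)
cuts = peaks(profile)
```

In this example the strongest boundary falls on the interval between the fourth and fifth notes, where the leap, the long inter-onset interval, and the rest coincide.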

The structure analysis component outputs a section sequence in which each section is parameterized by label, occurrence, numOfPhrase, and length. The attribute label denotes the label of the section. The attribute occurrence denotes the number of appearances of the same label. The attribute numOfPhrase denotes the number of phrases in the section. The attribute length denotes the length of the section. In the learning step, a statistical analysis of the set of section sequences is carried out to capture the common patterns of the music structures.

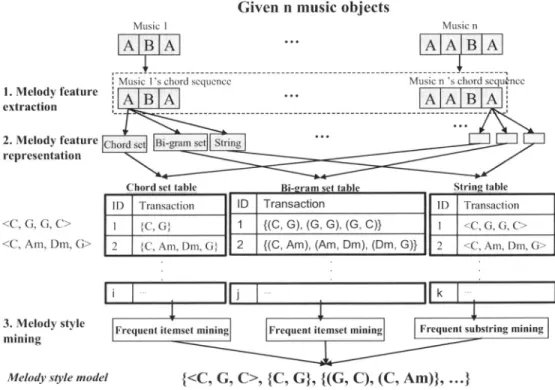

4.2 Chord Analysis and Melody Style Rule Learning

After the analysis of the music structure, the melodies are segmented into sections. The segmented melodies are collected for music style mining. We proposed the music style mining technique in [11] to construct the melody style model. As shown in Figure 4, the proposed melody style mining technique has three steps: melody feature extraction, melody feature representation, and melody style mining. The basic idea is to extract the chords accompanying a melody as the feature of the melody. A chord is a number of pitches sounded simultaneously. The chord assignment algorithm is based on harmony theory; the detailed algorithm can be found in our previous work [11]. After the chords are determined, the feature of a melody can be represented as a set of chords, a set of chord bi-grams, or a sequence of chords. For instance, given the chord sequence <C, G, G, C>, the set-of-chords representation is {C, G}, the bi-gram representation is {(C, G), (G, G), (G, C)}, and the sequence representation is still <C, G, G, C>.
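The three representations are simple to derive from a chord sequence. The short Python sketch below shows one way to do it; the function names are hypothetical and chords are represented as plain strings for illustration.

```python
def chord_set(seq):
    """Set-of-chords representation: which chords occur at all."""
    return frozenset(seq)

def chord_bigrams(seq):
    """Bi-gram representation: the set of adjacent chord pairs."""
    return frozenset(zip(seq, seq[1:]))

def chord_string(seq):
    """Sequence representation: the chords in their original order."""
    return tuple(seq)

seq = ["C", "G", "G", "C"]
features = (chord_set(seq), chord_bigrams(seq), chord_string(seq))
```

The set form discards order and repetition, the bi-gram form keeps only local transitions, and the sequence form keeps everything, so the three views capture progressively more of the harmonic context.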

To uncover the hidden relationships between chords and music styles, we employ mining methods suited to the three representations of the melody feature. If the melody feature is represented as a set of chords or a set of chord bi-grams, a frequent itemset mining algorithm is utilized. If the melody feature is represented as a sequence of chords, a frequent substring mining algorithm modified from sequential pattern mining is employed. The discovered frequent patterns in terms of chords constitute the music style model.
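As a rough illustration of the two kinds of mining, the sketch below uses a small level-wise (Apriori-style) search for frequent itemsets and a brute-force count of contiguous substrings. Both are generic textbook techniques swapped in for illustration; the paper does not specify which algorithms were used, and the function names and the absolute `min_support` count are assumptions.

```python
from collections import Counter
from itertools import combinations

def frequent_itemsets(transactions, min_support):
    """Level-wise search for itemsets contained in >= min_support transactions."""
    transactions = [frozenset(t) for t in transactions]
    items = {x for t in transactions for x in t}
    frequent = {}
    candidates = [frozenset([x]) for x in items]
    while candidates:
        counts = {c: sum(1 for t in transactions if c <= t) for c in candidates}
        level = {c: n for c, n in counts.items() if n >= min_support}
        frequent.update(level)
        # Join frequent k-itemsets that differ in one item into (k+1)-candidates.
        candidates = list({a | b for a, b in combinations(level, 2)
                           if len(a | b) == len(a) + 1})
    return frequent

def frequent_substrings(sequences, min_support):
    """Contiguous chord substrings occurring in >= min_support sequences."""
    counts = Counter()
    for seq in sequences:
        seen = set()
        for i in range(len(seq)):
            for j in range(i + 1, len(seq) + 1):
                seen.add(tuple(seq[i:j]))
        counts.update(seen)  # each sequence supports a substring at most once
    return {s: n for s, n in counts.items() if n >= min_support}

# Chord sets of two music objects, as in the chord set table of Fig. 4.
sets = [{"C", "G"}, {"C", "Am", "Dm", "G"}]
itemsets = frequent_itemsets(sets, min_support=2)

strings = [["C", "G", "C"], ["Am", "C", "G"]]
substrings = frequent_substrings(strings, min_support=2)
```

The brute-force substring count is quadratic in the sequence length, which is acceptable for short chord sequences but would need a suffix-based structure for long ones.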

4.3 Motif Mining and Motif Selection Rule Learning

A motif is a recurring fragment of notes that may be used to construct the entirety or parts of a theme. Based on music theory, there are several ways to develop a motif. The major ways of motif development are repetition, sequence, contrary motion, retrograde, and augmentation and diminution. Figure 5 shows these five developments of motif variations. The first segment, enclosed by the block, is the original motif, and the following segments are developments of the original motif. We have modified the repeating pattern algorithms, based on the development of motifs, to discover the motifs [5].

Fig. 5. Examples of the development of motif: (1) Repetition, (2) Sequence, (3) Contrary Motion, (4) Retrograde, (5) Augmentation and Diminution.

The motif selection model describes the importance of motifs. Let $\mathit{Freq}_{m,\mathit{music}}$ denote the frequency with which a motif $m$ appears in a music object $\mathit{music}$. We normalize this frequency as in equation (1) and denote the result by $\mathit{Support}(m, \mathit{music})$. For a motif $m$ in the given set of music objects $DB$, we sum up its supports and denote the sum by $\mathit{ASupport}(m, DB)$, as in equation (2). Finally, we normalize $\mathit{ASupport}$ as in equation (3) and denote the result by $\mathit{NSupport}(m, DB)$, where $\mathit{Min}(DB)$ and $\mathit{Max}(DB)$ represent the minimum and maximum $\mathit{ASupport}$ of the motifs in $DB$.

$$\mathit{Support}(m, \mathit{music}) = \frac{\mathit{Freq}_{m,\mathit{music}}}{\sum_{\forall \mathit{motif} \in \mathit{music}} \mathit{Freq}_{\mathit{motif},\mathit{music}}} \tag{1}$$

$$\mathit{ASupport}(m, DB) = \sum_{\forall \mathit{music} \in DB} \mathit{Support}(m, \mathit{music}) \tag{2}$$

$$\mathit{NSupport}(m, DB) = \frac{\mathit{ASupport}(m, DB) - \mathit{Min}(DB) + 1}{\mathit{Max}(DB) - \mathit{Min}(DB) + 1} \tag{3}$$

Fig. 6. Flow chart of music generation.
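The motif support computation of equations (1) to (3) can be sketched in a few lines of Python. The data layout is an assumption made for illustration: each music object is a dictionary mapping a motif identifier to its frequency, and the function names are hypothetical.

```python
def support(freq):
    """Equation (1): frequency of each motif relative to all motifs in one object."""
    total = sum(freq.values())
    return {m: c / total for m, c in freq.items()}

def nsupport(db):
    """Equations (2) and (3): summed support per motif, then normalization."""
    asupport = {}
    for freq in db:
        for m, s in support(freq).items():
            asupport[m] = asupport.get(m, 0.0) + s
    lo, hi = min(asupport.values()), max(asupport.values())
    return {m: (a - lo + 1) / (hi - lo + 1) for m, a in asupport.items()}

# Two music objects with hypothetical motif counts.
db = [{"m1": 3, "m2": 1}, {"m1": 1, "m3": 1}]
scores = nsupport(db)
```

Because of the "+1" terms in equation (3), the least supported motif still receives a positive score, 1 / (Max(DB) - Min(DB) + 1), rather than zero, so every mined motif remains selectable.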

V. MUSIC GENERATION

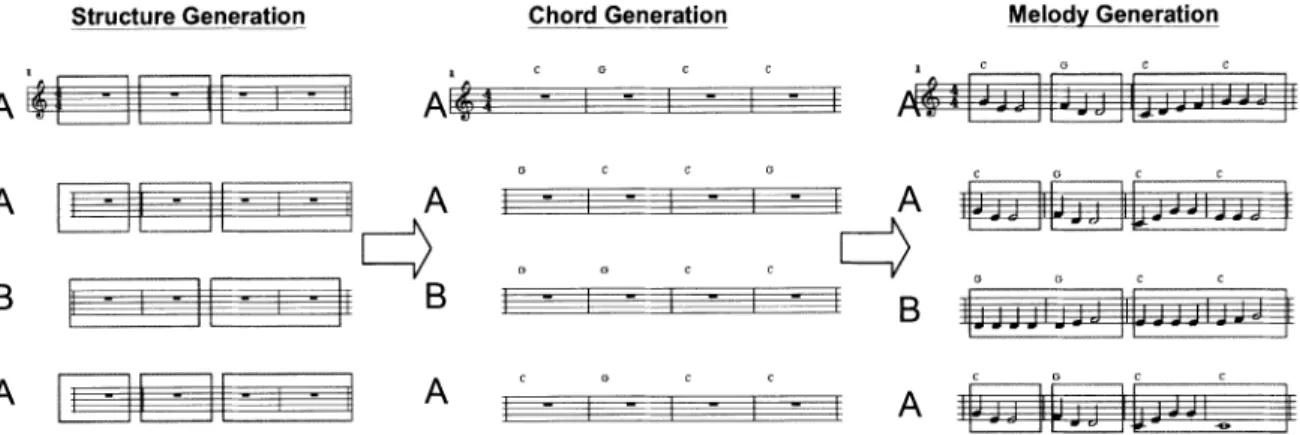

In this section, we discuss the method used to generate music from the three models constructed in the previous steps. The flow chart is shown in Figure 6, and Figure 7 demonstrates an example of music generation. According to the probability distribution in the music structure model, the system generates the music structure, expressed as a sequence of sections. For each section in the section sequence, the heuristic algorithm Phrase-Arrangement, shown in Figure 8, decomposes the section into one or more variable-length phrases, which constitute the second level of the structure.
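A possible Python reading of the Phrase-Arrangement heuristic is sketched below. Several points are assumptions rather than details confirmed by the paper: the loop is taken to continue only while both conditions hold, the isMotivic flag is drawn for every phrase including the last, and a phrase is marked isVariance when an earlier phrase in the same section has the same length. Under these assumptions, a section of length 4 with 3 phrases is split into lengths 1, 1, 2, and a section of length 4 with 2 phrases into lengths 2, 2.

```python
import random

def phrase_arrangement(s_length, num_phrase, rng=random):
    """Split a section of integer length s_length into num_phrase phrases (sketch)."""
    ave_phrase = s_length / num_phrase
    # Exponent whose power of two is closest to the average phrase length.
    e = min(range(s_length.bit_length() + 1),
            key=lambda k: abs(2 ** k - ave_phrase))
    p_length = 2 ** e
    lengths = []
    # Assumption: continue only while room remains AND more than one phrase is left.
    while s_length - p_length > 0 and num_phrase > 1:
        lengths.append(p_length)
        s_length -= p_length
        num_phrase -= 1
    lengths.append(s_length)  # the last phrase takes whatever remains
    phrases = []
    for i, length in enumerate(lengths):
        phrases.append({
            "pLength": length,
            "isMotivic": rng.random() < 0.6,  # true with probability 60%
            # Assumption: a variant is a phrase repeating an earlier length.
            "isVariance": i > 0 and length in lengths[:i],
        })
    return phrases

section_a = phrase_arrangement(4, 3, random.Random(0))
section_b = phrase_arrangement(4, 2, random.Random(0))
```

Passing a seeded `random.Random` instance makes the isMotivic draws reproducible, which is convenient when comparing generated structures.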

Fig. 7. An example of music generation.

Algorithm Phrase-Arrangement

Input: the length of the section (sLength) and the number of phrases in this section (numPhrase)

Output: the arranged phrases

1) initialize all pLength in this section to zero
2) i = 0
3) avePhrase = sLength / numPhrase
4) e = arg min_k |2^k - avePhrase|
5) pLength = 2^e
6) while (sLength - pLength > 0) and (numPhrase > 1), repeat steps 7) to 11)
7) phrase[i].pLength = pLength
8) set isMotivic to true with probability 60% and to false with probability 40%
9) sLength = sLength - pLength
10) numPhrase = numPhrase - 1
11) i++
12) phrase[i].pLength = sLength
13) except for the first phrase, set the parameter isVariance of all phrases with the same length to true

Fig. 8. Phrase-Arrangement algorithm.

Then the chord generation component generates the chord for each bar based on the music style model. As stated in section 4.2, the music style model consists of the frequent patterns in terms of chords. The chord generation component randomly generates several chord sequences. The more patterns of the music style model a randomly generated chord sequence contains, the higher the score of this chord sequence. The chord sequence with the highest score is assigned to the respective bars.
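The generate-and-score idea for chord sequences can be sketched as follows. This is a minimal illustration under assumptions: the style model is encoded as a list of `(kind, pattern)` pairs, the score simply counts how many model patterns a candidate contains, and the chord vocabulary, the number of trials, and all names are hypothetical.

```python
import random

def contains(seq, pattern):
    """True if `pattern` occurs as a contiguous run inside `seq`."""
    n = len(pattern)
    return any(tuple(seq[i:i + n]) == tuple(pattern)
               for i in range(len(seq) - n + 1))

def score(seq, model):
    """Number of style-model patterns contained in a chord sequence."""
    chords = set(seq)
    bigrams = set(zip(seq, seq[1:]))
    total = 0
    for kind, pattern in model:
        if kind == "set":
            total += set(pattern) <= chords
        elif kind == "bigram":
            total += set(pattern) <= bigrams
        elif kind == "string":
            total += contains(seq, pattern)
    return total

def generate_chords(model, vocabulary, length, trials=200, rng=random):
    """Draw random chord sequences and keep the one with the highest score."""
    candidates = [[rng.choice(vocabulary) for _ in range(length)]
                  for _ in range(trials)]
    return max(candidates, key=lambda s: score(s, model))

# A style model shaped like the example in Fig. 4.
model = [
    ("string", ("C", "G", "C")),
    ("set", ("C", "G")),
    ("bigram", (("G", "C"), ("C", "Am"))),
]
best = generate_chords(model, ["C", "G", "Am", "Dm"], 4, rng=random.Random(1))
```

Weighting each pattern by its mined support, rather than counting every pattern equally, would be a natural refinement, but the text does not say whether such weighting is used.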

After the structure and chord information are determined, the melody generation component works as follows. For each phrase, the melody generation component selects a motif from the motif selection model. In general, the duration of a motif is shorter than that of a phrase. The selected motif is therefore developed (for example, repeated) based on the major ways of motif development mentioned in section 4.3.
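The motif developments named in section 4.3 can be written as small transformations on a note list. The sketch below assumes a motif is a list of `(pitch, duration)` pairs with MIDI pitch numbers, that a sequence is an exact transposition by a number of semitones, and that contrary motion mirrors every pitch around the first note; these representational choices and the function names are illustrative assumptions.

```python
def repetition(motif):
    """Restate the motif unchanged."""
    return list(motif)

def sequence(motif, semitones):
    """Restate the motif at a higher or lower pitch level."""
    return [(p + semitones, d) for p, d in motif]

def contrary_motion(motif):
    """Invert every melodic interval around the first pitch."""
    first = motif[0][0]
    return [(2 * first - p, d) for p, d in motif]

def retrograde(motif):
    """Play the motif backwards."""
    return list(reversed(motif))

def augmentation(motif, factor=2):
    """Lengthen every duration by `factor`."""
    return [(p, d * factor) for p, d in motif]

def diminution(motif, factor=2):
    """Shorten every duration by `factor`."""
    return [(p, d / factor) for p, d in motif]

motif = [(60, 1.0), (62, 0.5), (64, 0.5)]
variants = {
    "sequence": sequence(motif, 2),
    "contrary": contrary_motion(motif),
    "retrograde": retrograde(motif),
    "augmentation": augmentation(motif),
    "diminution": diminution(motif),
}
```

A phrase could then be filled by concatenating the original motif with a few of these variants until the phrase length is reached.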

To ensure that the developed sequence of motifs is harmonious with the determined chord sequence, an evaluation function is employed to measure the harmonization between a motif sequence and a chord sequence. This evaluation function is, in fact, the inverse of the chord assignment algorithm mentioned in section 4.2. In melody style mining, given a melody, the chord assignment tries to find the best accompanying chord sequence. Here, given a chord sequence, the evaluation function tries to find the best accompanying motif sequence. If the developed motif sequence is evaluated as disharmonious, the melody generation component selects another motif from the motif selection model and develops the motif variation. This process is repeated until a harmonious motif sequence is produced.

Note that, from the music structure point of view, some sections are associated with the same label. An example, shown in Figure 9, is sections one and three; both are associated with the label "A." For the phrases contained in a repeated section, the motif sequences are simply duplicated from the motif sequences generated for the phrases of the previous section with the same label.

Finally, the melody generation component concatenates the motif sequences along with the corresponding chord sequences to compose the music.

VI. EXPERIMENTS

To evaluate the effectiveness of the proposed music generation approach, two experiments were performed. The first experiment is a discrimination test that asks whether machine-generated music can be distinguished from human-composed music. The other experiment tests whether the music style of the generated music is similar to that of the given music objects.

It is difficult to evaluate the effectiveness of a computer music composition system because the evaluation of works of art often comes down to individual subjective opinion. In 2001, M. Pearce and G. Wiggins addressed this problem and proposed a method to evaluate computer music composition systems [10]. Here, we adopt this method to design our experiments.

Fig. 9. An example of melody generation (sections <A, 2, 3, 4> and <B, 1, 2, 4>; phrases a1 with L=1, a2 with L=2, and b1 and b1' with L=2).

In the first experiment, the generated music objects were tested with an approach similar to the Turing test. Subjects were asked to discriminate between music composed by human composers and music generated by the proposed system. Our system was considered to succeed if subjects could not distinguish the system-generated music from the human-composed music. There were 36 subjects, including four well-trained music experts. The prepared dataset consists of 10 system-generated music objects and 10 human-composed music objects. The human-composed pieces are "Beyer 8", "Beyer 11", "Beyer 35", "Beyer 51", "Through All Night", "Beautiful May", "Listen to Angle Singing", "Melody", "Moonlight", and "Up to Roof." These music objects are all piano music containing melody and accompaniment. The 20 music objects were presented to subjects in random order. Subjects were asked to listen to each music object and answer whether it was system-generated or human-composed. The proportion of correctly discriminated music was calculated from the obtained results (Mean is the average accuracy). A significance test was performed with the one-sample t-test against 0.5 (the expected value if subjects discriminated randomly).

TABLE I. THE RESULT OF THE DISCRIMINATION TEST

| | Mean | SD | DF | t | P-value |
|---|---|---|---|---|---|
| All subjects | 0.522 | 0.115 | 35 | 1.16 | 0.253 |
| All subjects except experts | 0.503 | 0.106 | 31 | 0.166 | 0.869 |

SD: the standard deviation, DF: the degrees of freedom, t: the t statistic.
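As a quick arithmetic check, the one-sample t statistic can be recomputed from the summary values in Table I using t = (mean - 0.5) / (SD / sqrt(n)), with n = DF + 1. The sketch below is only an illustration; because it starts from the rounded means and standard deviations, it should be expected to land close to, but not exactly on, the reported t values.

```python
import math

def t_statistic(mean, sd, n, mu0=0.5):
    """One-sample t statistic from summary statistics."""
    return (mean - mu0) / (sd / math.sqrt(n))

# n = DF + 1 for a one-sample test.
t_all = t_statistic(0.522, 0.115, 36)          # all subjects
t_non_experts = t_statistic(0.503, 0.106, 32)  # all subjects except experts
```

Both values fall far below the critical values usually applied at conventional significance levels, in line with the large P-values reported in the table.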

The result of the experiment is shown in Table I. The result shows that it is difficult to discriminate the system-generated music objects from the human-composed ones. The group of all subjects (including experts) shows slightly higher discrimination because some of them have a well-trained music background.

In the second experiment, we evaluate whether the music style of the system-generated music is similar to that of the given music. We demonstrated our system to subjects on the web page http:/avatar.cs.nccu.edu.twx '-tcvechiu/crns/expenment ,Inde.c i. For each round of music generation, subjects were asked to give a score from 0 to 3 denoting the degree of similarity they subjectively felt, from dissimilar to similar. Each subject repeated the test three times. In total, 31 subjects performed this test. The mean score is 1.405 and the standard deviation is 0.779.

VII. CONCLUSIONS

In this paper, we proposed a new framework for a music composition system. Data mining techniques were utilized to analyze and discover the common patterns or characteristics of music structure, melody style, and motif from the given music objects. The discovered patterns and characteristics constitute the music structure model, the melody style model, and the motif selection model. The proposed system generates music based on these three models. The experimental results show that the system-generated music is not easy to discriminate from human-composed music.

REFERENCES

[1] E. Cambouropoulos, "The Local Boundary Detection Model (LBDM) and its Application in the Study of Expressive Timing," Proc. of the International Computer Music Conference, ICMC'01, 2001.

[2] D. Cope, "Computer Modeling of Musical Intelligence in EMI," Computer Music Journal, Vol. 16, No. 2, 1992.

[3] S. Dubnov, G. Assayag, O. Lartillot and G. Bejerano, "Using Machine-Learning Methods for Musical Style Modeling," IEEE Computer, Vol. 36, No. 10, 2003.

[4] M. Farbood, "Hyperscore: A New Approach to Interactive, Computer-Generated Music," Master Thesis, Department of Science in Media Arts and Sciences, Massachusetts Institute of Technology, USA, 2001.

[5] M. C. Ho, "Theme-based Music Structural Analysis," Master Thesis, Department of Computer Science, National Cheng Chi University, 2004.

[6] J. L. Hsu, C. C. Liu and A. L. P. Chen, "Efficient Repeating Pattern Finding in Music Database," In Proc. of IEEE Transaction on Multimedia, 2001.

[7] N. C. Jones and P. A. Pevzner, "An Introduction to Bioinformatics Algorithms," The MIT Press, 2004.

[8] Y. Marom, "Improvising Jazz with Markov Chains," Ph. D. Thesis, Department of Computer Science, Western Australia University, Australia, 1997.

[9] E. R. Miranda, Composing Music with Computers, Focal Press, 2001.

[10] M. Pearce and G. Wiggins, "Towards A Framework for the Evaluation of Machine Compositions," In Proc. of AISB'01 Symposium on Artificial Intelligence and Creativity in the Arts and Sciences, 2001.

[11] M. K. Shan and F. F. Kuo, "Music Style Mining and Classification by Melody," IEICE Transactions on Information and System, Vol. E86-D, No. 4, 2003.

[12] B. Thom, "BoB: An Improvisational Music Companion," Ph. D. Thesis, Department of Computer Science, Carnegie Mellon University, USA, 2001.

[13] A. L. Uitdenbogerd and J. Zobel, "Manipulation of Music For Melody Matching," In Proc. of ACM International Conference on Multimedia, MM'98, 1998.