KNOWLEDGE LEARNING ON FUZZY EXPERT

NEURAL NETWORKS*

H.C. Fu, J.J. Shann

Department of Computer Science and Information Engineering

Hsiao-Tien Pao

Department of Management Science

National Chiao-Tung University,

Hsinchu, Taiwan 300,

R.O.C.

Abstract

The proposed fuzzy expert network is an event-driven, acyclic neural network designed for knowledge learning on a fuzzy expert system. Initially, the network is constructed according to primitive (rough) expert rules, including the input and output linguistic variables and values of the system. Each inference rule corresponds to an inference network, which contains five types of nodes: Input, Membership-Function, AND, OR, and Defuzzification Nodes. We propose a two-phase learning procedure for the inference network. The first phase is the competitive backpropagation (CBP) training phase, and the second phase is the rule-pruning phase. The CBP learning algorithm in the training phase enables the network to learn the fuzzy rules as precisely as backpropagation-type learning algorithms and yet as quickly as competitive-type learning algorithms. After the CBP training, the rule-pruning process is performed to delete redundant weight connections, yielding a simpler network structure with comparable retrieval performance.

1 Introduction

Recently, there has been more and more research on implementing fuzzy expert systems in neural networks, motivated by the advantage of learning fuzzy expert rules from examples [7, 3, 2, 5, 8]. Most neural network methodologies for learning knowledge can be divided into two categories: backpropagation-type and competitive-type. Roughly speaking, backpropagation-type learning algorithms learn more precisely than competitive-type algorithms because they are based on gradient descent search; however, they take a long time and numerous training epochs to converge.

*This research is supported in part by the National Science Council under Grant NSC82-0408-E009-427.

In contrast, competitive-type learning algorithms learn much more rapidly than backpropagation-type algorithms because they are based on unsupervised clustering; however, the knowledge they learn may not be precise enough. Therefore, an important issue that remains to be resolved in this field is how to learn knowledge both precisely and rapidly.

In this paper, we propose an event-driven, acyclic neural network for knowledge learning on a fuzzy expert system. The fuzzy rules considered are in the linguistic IF-THEN form. The IF-part, i.e., the antecedent, of a rule is the conjunction of several input linguistic variables [12], each associated with a linguistic value. The THEN-part, i.e., the consequence, of a rule contains only one output or intermediate linguistic variable associated with a linguistic value. The proposed learning procedure of this network consists of two phases. The first phase is a competitive backpropagation (CBP) training phase, and the second phase is a rule-pruning phase. The CBP learning algorithm in the training phase is designed to combine the advantages of backpropagation-type and competitive-type learning methodologies. After the CBP training phase, a rule-pruning process is executed to delete redundant weight connections and to better represent the inference rules.

This paper is organized as follows. The structure of the network and the functions of the nodes in the network are described in Section 2. The CBP learning algorithm is stated in Section 3. A pruning method for deleting redundant weight connections is described in Section 4. In Section 5, a simple exemplar problem is simulated and analyzed. Finally, conclusions and future research plans are presented in Section 6.

2 Network Structure and Node Functions

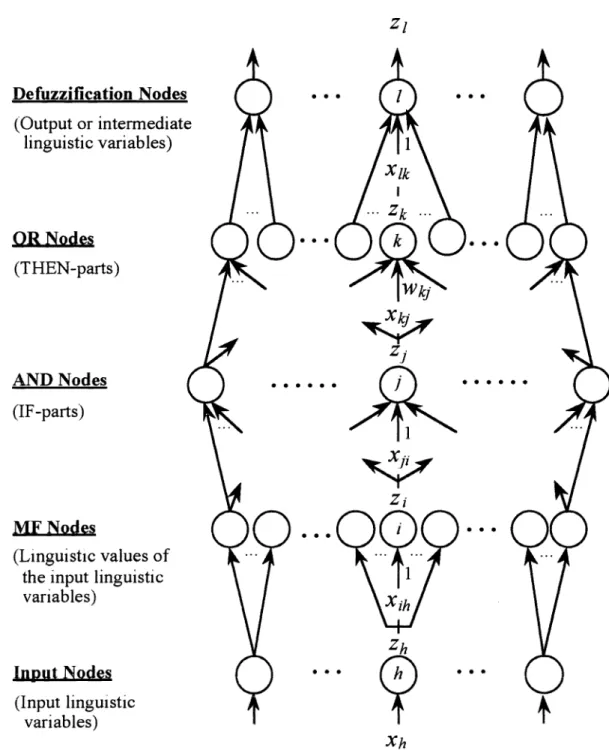

in general, the inference structure of a fuzzy expert system can be classified into two categories: ( 1 ) single level of inferences, and (2) multi-level of inferences. A single level inference expert system

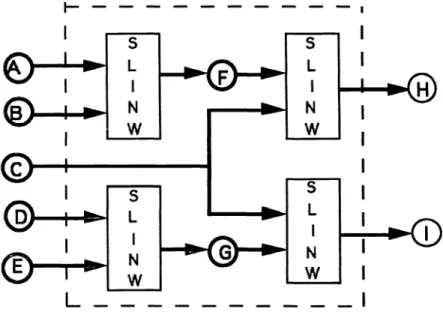

can be constructed by a five layered neural networks, and the inference knowledge can be learned from examples. The network structure for single-level inference as shown in Figure 1. Knowledge learning for single-level inference system have been presented in [9, 11]. For multi-level inference expert system, outputs of a defuzzification function can be inputs to a membership function of another inference rules. implementing a multi-level inference system on neural networks, requires training examples and primitive knowledge of inference relations (rules). For instance, suppose an expert expert system is composed by the following rules:

Rule 1: if A and B then F,

Rule 2: if D and E then G,

Rule 3: if C and F then H,

Rule 4: if C and G then I.
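The dependency structure implied by these four rules can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the dictionary layout and the helper names `classify_variables` and `evaluation_order` are hypothetical and do not come from the paper. The sketch separates input, intermediate, and output variables and derives an order in which the single-level inference networks could be evaluated.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Rules from the text: (antecedent variables, consequent variable).
rules = {
    "Rule1": (("A", "B"), "F"),
    "Rule2": (("D", "E"), "G"),
    "Rule3": (("C", "F"), "H"),
    "Rule4": (("C", "G"), "I"),
}

def classify_variables(rules):
    """Split variables into input, intermediate, and output sets."""
    used = {v for ante, _ in rules.values() for v in ante}
    produced = {cons for _, cons in rules.values()}
    return used - produced, used & produced, produced - used

def evaluation_order(rules):
    """Order variables so that each one follows the variables it is inferred from."""
    deps = {}
    for ante, cons in rules.values():
        deps.setdefault(cons, set()).update(ante)
    return list(TopologicalSorter(deps).static_order())

inputs, intermediates, outputs = classify_variables(rules)
order = evaluation_order(rules)
```

Here F and G come out as intermediate variables, so Rule 3 and Rule 4 can only be evaluated after Rule 1 and Rule 2, which is the multi-level situation described in the text.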

Without knowing the primitive inference relations, a neural expert system can also be constructed with a single-level inference structure and trained with many examples. Obviously, the resulting network will contain more connections and require more training iterations for the learning process to converge. This paper focuses on the construction and training of multi-level inference expert networks. First, according to the primitive inference relations, an inference flow diagram can be constructed (see Figure 2). Based on the inference diagram, we can apply the single-level inference structure to implement each individual inference rule. As proposed in [9, 11], the network contains five types of nodes: Input, Membership-Function, Fuzzy-AND, Fuzzy-OR, and Defuzzification Nodes.

SPIE Vol. 2243 / 164

Figure 1: The structure of a fuzzy expert network for single-level inference. The layers, from input to output, are: Input Nodes (input linguistic variables), MF Nodes (linguistic values of the input linguistic variables), AND Nodes (IF-parts), OR Nodes (THEN-parts), and Defuzzification Nodes (output or intermediate linguistic variables).

Figure 2: The structure of a fuzzy expert network for multi-level inference. (SLINW: Single Level Inference Network.)

Let $P_i$ be the set of the indices of the nodes each of which has its output link connected to node $i$. The semantic meaning, connectivity, and function of each type of node in the proposed network are described as follows:

• Input Nodes:

Each input node of the fuzzy expert network represents an input linguistic variable of the

fuzzy expert system, and is used as a buffer to broadcast the input to the

membership-function nodes of its linguistic values.

• MF (Membership-Function) Nodes:

Each MF node represents the membership function of a linguistic value associated with an input or an intermediate linguistic variable. An MF node has one input link emitted from an input node or from a defuzzification node. The output links of the MF node are connected to the AND nodes which represent the IF-parts containing this linguistic value of the linguistic variable. For each input or intermediate linguistic variable, there are $n$ MF nodes, where $n$ is the number of linguistic values associated with the linguistic variable. The output of an MF node is in the range of [0, 1] and represents the membership grade of the input or intermediate linguistic variable with respect to the membership function of a linguistic value of the variable.

In general, the most commonly used fuzzy-set membership functions are in the shape of a trapezoid, triangle, or bell. For bell-shaped membership functions, the operation of an MF node $i$ with an input link emitted from an input or defuzzification node $h$ is defined as follows:

$$z_i = \exp\left(-\frac{(x_{ih} - c_i)^2}{2\sigma_i^2}\right), \qquad (1)$$

where $c_i$ is the centroid, $\sigma_i^2$ is the variance, $x_{ih} = z_h$, and $z_h$ is the output value of node $h$. The weight of the input link of an MF node is unity.

• AND Nodes:

Each AND node represents an IF-part for the possible rules of the fuzzy expert system. An AND node has several input links, each of which is emitted from an MF node for the linguistic value of a linguistic variable contained in the IF-part. The output link of the AND node is connected to all the OR nodes which represent the THEN-parts of the fuzzy rules with this IF-part.

In fuzzy set theory, the most commonly used operator for fuzzy intersection is the min-operator suggested by Zadeh [13]. Therefore, the operation performed by an AND node $j$ is defined as follows:

$$z_j = \min_{i \in P_j}(x_{ji}) = \mathrm{MIN}_j, \qquad (2)$$

where $x_{ji} = z_i$. The weights of the input links of the AND node, the $w_{ji}$'s, are unity.

• OR Nodes:

Each OR node represents a THEN-part for the possible rules of the fuzzy expert system. The

operation performed by an OR node is to integrate fuzzy rules with the same consequence. An OR node may have several input links, each of which is emitted from an AND node, and its output link is connected to exactly one defuzzification node, which represents an output or an intermediate linguistic variable. The weight $w_{kj}$ of the link connected from an AND node $j$ to an OR node $k$ represents the weight of a fuzzy rule which regards node $j$ as the IF-part and node $k$ as the THEN-part.

In fuzzy set theory, the most commonly used operator for fuzzy union is the max-operator suggested by Zadeh [13]. Therefore, the operation performed by an OR node $k$ is defined as follows:

$$z_k = \max_{j \in P_k}(x_{kj} w_{kj}) = \mathrm{MAX}_k, \qquad (3)$$

where $x_{kj} = z_j$. The weights of the input links of an OR node, the $w_{kj}$'s, are learnable positive real numbers.

• Defuzzification Nodes:

Each defuzzification node represents either an intermediate linguistic variable or an output

linguistic variable, and performs the defuzzification concerning all the linguistic values of the

linguistic variable. A defuzzification node has several input links each of which is emitted

from an OR node. The output link of a defuzzification node may be connected to the

MF nodes of its linguistic values when the defuzzification node represents an intermediate linguistic variable, or may be one of the outputs of the network when the defuzzification node represents an output linguistic variable.

Suppose that the correlation-product inference and the fuzzy centroid defuzzification scheme [4] are used. The function of a defuzzification node $l$ is defined as follows:

$$z_l = \frac{\sum_{k \in P_l}(x_{lk} a_k c_k)}{\sum_{k \in P_l}(x_{lk} a_k)}, \qquad (4)$$

where $a_k$ and $c_k$ are the area and centroid of the membership function for a linguistic value of a linguistic variable in the THEN-part represented by OR node $k$, respectively, and $x_{lk} = z_k$. For a bell-shaped membership function of the form of Eq. (1), $a_k = \sqrt{2\pi}\,\sigma_k$, where $\sigma_k^2$ is the variance of the membership function. The weights of the input links of a defuzzification node are unity.

3 The Competitive Backpropagation Learning Algorithm

We propose a two-phase learning procedure for our fuzzy expert network. The first phase is a

competitive backpropagation (CBP) training phase, and the second phase is a rule-pruning phase. The basic concept of the CBP learning algorithm [9, 11] is to choose competitive operations, e.g., Eqs. (2) and (3), for the nodes in a neural network and to train the network by the process of the backpropagation learning algorithm.

In the first phase of the learning procedure, the backpropagation learning method is performed to minimize the error function $E = \frac{1}{2}\sum_{l=1}^{M}(T_l - z_l)^2$, where $M$ is the number of output linguistic variables, and $T_l$ and $z_l$ are the target and the actual output values of a defuzzification node $l$ which represents an output linguistic variable. Since the proposed fuzzy expert network

is acyclic, it is guaranteed that the network has stable forward activation and backward error

propagation [6].
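The forward activation through the node functions of Eqs. (1) to (4), together with the error function, can be sketched in Python as follows. This is a minimal sketch rather than the authors' simulator: the function names are hypothetical, and the factor 1/2 in the error is an assumed convention, chosen so that the Delta value of an output node is $-(T_l - z_l)$.

```python
import math

def mf_bell(x, c, sigma):
    """Eq. (1): bell-shaped membership grade of x for centroid c and spread sigma."""
    return math.exp(-((x - c) ** 2) / (2.0 * sigma ** 2))

def and_node(grades):
    """Eq. (2): fuzzy intersection by the min-operator (input weights are unity)."""
    return min(grades)

def or_node(and_outputs, weights):
    """Eq. (3): fuzzy union by the max-operator over weighted AND-node outputs."""
    return max(x * w for x, w in zip(and_outputs, weights))

def defuzzify(or_outputs, areas, centroids):
    """Eq. (4): centroid defuzzification with correlation-product inference.

    Assumes at least one OR node fires, so the denominator is nonzero.
    """
    num = sum(x * a * c for x, a, c in zip(or_outputs, areas, centroids))
    den = sum(x * a for x, a in zip(or_outputs, areas))
    return num / den

def squared_error(targets, outputs):
    """Error over the output nodes; the 1/2 factor is an assumed convention."""
    return 0.5 * sum((t - z) ** 2 for t, z in zip(targets, outputs))
```

Because the network is acyclic, these functions can be applied layer by layer in a single pass: MF grades feed the AND nodes, AND outputs feed the OR nodes, and the OR outputs of each linguistic variable feed its defuzzification node.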

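Let $N_i$ be the set of the indices of the nodes each of which has an input link emitted from node $i$. The definition of the Delta values for OR, AND, and MF nodes, the gradients of $E$ with respect to the learnable weights, and the adjustments of learnable weights are described as follows:

• The definition of Delta values:

For a defuzzification node $l$, the Delta value $\delta_l$ is defined as follows:

$$\delta_l = \frac{\partial E}{\partial z_l} = \begin{cases} -(T_l - z_l), & \text{if node } l \text{ represents an output linguistic variable,} \\ -\sum_{i \in N_l}\left[\delta_i z_i \dfrac{(z_l - c_i)}{\sigma_i^2}\right], & \text{if it represents an intermediate linguistic variable.} \end{cases} \qquad (5)$$

For an OR node $k$, the Delta value is defined as follows:

$$\delta_k = \frac{\partial E}{\partial z_k} = \frac{\partial E}{\partial z_l}\frac{\partial z_l}{\partial z_k} = \delta_l \frac{a_k (c_k - z_l)}{\sum_{k' \in P_l}(x_{lk'} a_{k'})}, \qquad (6)$$

where the output link of node $k$ is connected to defuzzification node $l$ only. For an AND node $j$, the Delta value is defined as follows:

$$\delta_j = \frac{\partial E}{\partial z_j} = \sum_{k \in N_j}\frac{\partial E}{\partial z_k}\frac{\partial z_k}{\partial z_j} = \sum_{k \in N_j} \begin{cases} \delta_k w_{kj}, & \text{if } x_{kj} w_{kj} = \mathrm{MAX}_k, \\ 0, & \text{otherwise.} \end{cases} \qquad (7)$$

For an MF node $i$, the Delta value is defined as follows:

$$\delta_i = \frac{\partial E}{\partial z_i} = \sum_{j \in N_i}\frac{\partial E}{\partial z_j}\frac{\partial z_j}{\partial z_i} = \sum_{j \in N_i} \delta_j \cdot \begin{cases} 1, & \text{if } x_{ji} = \mathrm{MIN}_j, \\ 0, & \text{otherwise.} \end{cases} \qquad (8)$$

• The gradient of $E$ with respect to the weight of a fuzzy rule: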

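For the weight $w_{kj}$ of a link connected from AND node $j$ to OR node $k$, the gradient of $E$ with respect to $w_{kj}$ is evaluated as follows:

$$\nabla E_{w_{kj}} = \frac{\partial E}{\partial w_{kj}} = \frac{\partial E}{\partial z_k}\frac{\partial z_k}{\partial w_{kj}} = \begin{cases} \delta_k x_{kj}, & \text{if } x_{kj} w_{kj} = \mathrm{MAX}_k, \\ 0, & \text{otherwise.} \end{cases} \qquad (9)$$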

• The adjustment of a learnable weight:

The adjustment of a learnable weight $w_{kj}$, which is based on the gradient descent search, can be described as follows:

$$w_{kj}(t + 1) = w_{kj}(t) - \beta\,\nabla E_{w_{kj}},$$

where $\beta$ is the learning rate.

The knowledge of the fuzzy rules learned by the proposed CBP algorithm is distributed over the weights of the links between AND and OR nodes.
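One CBP update of the rule weights can be sketched in Python for the simplest case of a single output defuzzification node. This is a hedged illustration, not the authors' implementation: the function name `cbp_step` and its argument layout are hypothetical, ties for the maximum are broken by taking the first winning link (the text does not say how ties are handled), and the positivity of the weights is not enforced.

```python
def cbp_step(x, w, areas, centroids, target, beta):
    """Apply one gradient step to the rule weights and return the pre-update output.

    x[j]    : output of AND node j
    w[k][j] : weight of the link from AND node j to OR node k (updated in place)
    areas, centroids : a_k and c_k of the output membership functions
    """
    # Forward pass, Eq. (3): each OR node keeps its maximal weighted input.
    winners = [max(range(len(x)), key=lambda j: x[j] * wk[j]) for wk in w]
    z_or = [x[j] * wk[j] for wk, j in zip(w, winners)]
    # Forward pass, Eq. (4): centroid defuzzification.
    den = sum(z * a for z, a in zip(z_or, areas))
    z_out = sum(z * a * c for z, a, c in zip(z_or, areas, centroids)) / den
    # Backward pass: Delta of the output node, assuming E = 1/2 (T - z)^2.
    delta_out = -(target - z_out)
    for k, j in enumerate(winners):
        # Eq. (6): Delta of OR node k.
        delta_k = delta_out * areas[k] * (centroids[k] - z_out) / den
        # Eq. (9) and the update rule: only the winning link has a nonzero gradient.
        w[k][j] -= beta * delta_k * x[j]
    return z_out
```

Since only the winning link of each OR node is adjusted per example, each step touches far fewer weights than a dense backpropagation update, which is the competitive aspect of the algorithm.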

4 The Rule-Pruning Method

In general, after ideal fuzzy rule learning, a sound rule base is expected to be obtained. A sound rule base is defined as one in which, for an output linguistic variable, at most one consequence can be implied from each possible antecedent. However, since the knowledge of fuzzy rules learned by the CBP training is distributed over the weights on the input links of OR nodes, there may be rules with identical antecedents and output linguistic variables but with different output linguistic values. Therefore, a pruning method is required to determine which rule in such a group of rules should remain while the others are pruned to form a sound rule base.

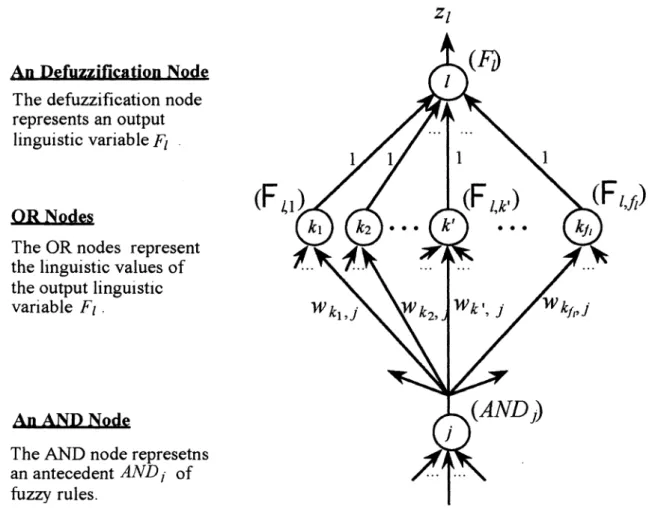

The physical meaning of the weights on the input links of OR nodes is explained in the following. After the CBP training, the learned weights $w_{kj}$ on a set of links from an AND node $j$ to the OR nodes of an output linguistic variable $F_l$ (see Figure 3) are interpreted as the weights (or certainty factors) of a set of fuzzy rules which contain the same IF-part and are related to the same output linguistic variable with different linguistic values.

Figure 3: The diagram of the possible fuzzy rules with identical antecedent $AND_j$ for an output linguistic variable $F_l$. The defuzzification node represents the output linguistic variable $F_l$; the OR nodes represent the linguistic values $F_{l,1}, \ldots, F_{l,f_l}$ of $F_l$; the AND node represents an antecedent $AND_j$ of fuzzy rules.

As an example, Figure 3 can be interpreted as the following fuzzy rules:

$R_{j,l,1}$: If $AND_j$, then $F_l$ is $F_{l,1}$. ($w_{k_1,j}$)

$R_{j,l,2}$: If $AND_j$, then $F_l$ is $F_{l,2}$. ($w_{k_2,j}$)

$\vdots$

$R_{j,l,f_l}$: If $AND_j$, then $F_l$ is $F_{l,f_l}$. ($w_{k_{f_l},j}$)

where $AND_j$ is the antecedent represented by an AND node $j$. The effect of these rules is that when the antecedent $AND_j$ holds, each of the rules is activated to a certain degree represented by the weight value (the certainty factor) associated with that rule.

For the output linguistic variable F1, at most one of the rules listed above could exist in a sound rule base. In the rule pruning phase, the fuzzy rules with identical antecedents and output linguistic variables are combined into one rule. Then, the redundant rules are deleted.

An evaluation equation is proposed here based on the concept of the center of gravity, which is also the basis of the defuzzification scheme described in Section 2. The centroid of these rules is evaluated according to the areas and centroids of the membership functions (of the linguistic values for the output linguistic variable) and the weights of these rules.

The equation for determining the remaining rule in the group of fuzzy rules represented by the links from an AND node $j$ to the OR nodes of an output linguistic variable $F_l$ is defined as follows [11]:

$$c_{lj} = \frac{\sum_{k}(w_{kj} a_k c_k)}{\sum_{k}(w_{kj} a_k)}, \qquad (10)$$

where $k \in N_j$ and $k \in P_l$.

We divide the space of the output linguistic variable $F_l$ into several nonoverlapping intervals corresponding to the membership functions of the linguistic values of $F_l$. The computed value of $c_{lj}$ will fall in one of the intervals, and the rule whose linguistic value corresponds to that interval is retained. Then we prune all the other rules (represented by weight connections) by setting their weights equal to zero.

For each AND node, the pruning process is performed to delete its redundant output links connected to the OR nodes associated with an output or intermediate linguistic variable. After the pruning phase, a sound rule base is obtained.
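The pruning step for one AND node can be sketched in Python as below. This is a sketch under stated assumptions: the function name `prune_rules` is hypothetical, and since the text does not specify how the nonoverlapping intervals are chosen, the caller supplies the cut points between consecutive intervals (for instance, midpoints between adjacent centroids).

```python
def prune_rules(w_j, areas, centroids, boundaries):
    """Keep a single outgoing rule of one AND node, following Eq. (10).

    w_j[k]     : weight from the AND node to OR node k of one output variable
    areas, centroids : a_k and c_k of the output membership functions
    boundaries : ascending cut points between consecutive intervals,
                 len(boundaries) == len(w_j) - 1 (an assumed representation)
    """
    den = sum(w * a for w, a in zip(w_j, areas))
    c = sum(w * a * ck for w, a, ck in zip(w_j, areas, centroids)) / den
    # Index of the interval that contains the centroid c.
    keep = sum(c >= b for b in boundaries)
    # All other rules are pruned by setting their weights to zero.
    return c, [w if k == keep else 0.0 for k, w in enumerate(w_j)]

# Hypothetical weights for three linguistic values with centroids 0, 1, and 2.
c_lj, pruned = prune_rules([0.1, 0.7, 0.4], [1.0, 1.0, 1.0],
                           [0.0, 1.0, 2.0], [0.5, 1.5])
```

In this example the centroid is about 1.25, which falls in the middle interval, so only the second rule keeps its weight.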

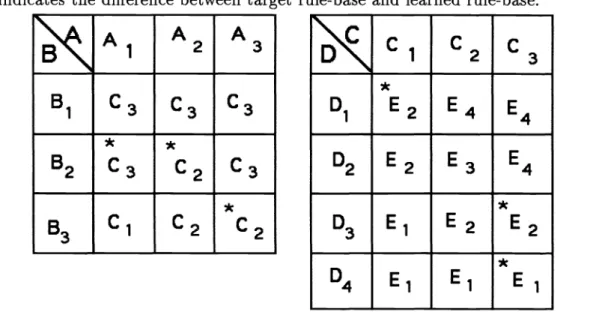

5 Simulation and Comparison

A general purpose simulator of the proposed fuzzy expert network has been implemented. Several

simple fuzzy expert systems were simulated to observe the learning ability of the fuzzy expert network. For an exemplar fuzzy expert system, there are five linguistic variables A, B, C, D, and

E. Their linguistic values are defined as:

1. A has three linguistic values A1, A2, and A3.
2. B has three linguistic values B1, B2, and B3.
3. C has three linguistic values C1, C2, and C3.
4. D has four linguistic values D1, D2, D3, and D4.
5. E has four linguistic values E1, E2, E3, and E4.

The inference relationship between these variables is:

1. C is inferred from A and B, and
2. E is inferred from C and D.

Therefore, A, B, and D are input linguistic variables, C is an intermediate linguistic variable, and E is an output linguistic variable. The target fuzzy rule bases are shown in Table 1.

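Table 1: The target rule base for the exemplar problem.

C, inferred from A (columns) and B (rows):

|    | A1 | A2 | A3 |
|----|----|----|----|
| B1 | C3 | C3 | C3 |
| B2 | C2 | C3 | C3 |
| B3 | C1 | C2 | C3 |

E, inferred from C (columns) and D (rows):

|    | C1 | C2 | C3 |
|----|----|----|----|
| D1 | E3 | E4 | E4 |
| D2 | E2 | E3 | E4 |
| D3 | E1 | E2 | E3 |
| D4 | E1 | E1 | E2 |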

From the simulation results of this fuzzy expert system, after 220 CBP training epochs, the error rate was about 6%. Then the pruning process was performed to delete the redundant links

with a slightly increased error rate, 7%. Table 2 shows the learned rule-base for the exemplar

fuzzy expert system.

Table 2: The retrieved rule base after 220 CBP learning epochs and the rule-pruning process. The * symbol indicates a difference between the target rule base and the learned rule base.

C, inferred from A (columns) and B (rows):

|    | A1  | A2  | A3  |
|----|-----|-----|-----|
| B1 | C3  | C3  | C3  |
| B2 | *C3 | *C2 | C3  |
| B3 | C1  | C2  | *C2 |

E, inferred from C and D (entries listed in the order recovered for columns C1 to C3; the rows for D1 and D4 are incomplete in the source):

D1: E4, E4

D2: E2, E3, E4

D3: E1, E2, *E2

D4: E1, E1

6 Concluding Remarks

A fuzzy expert network for rule learning of a fuzzy expert system is proposed and presented. The learning procedure of the network is divided into two phases. The first one is a competitive backpropagation (CBP) training phase, and the second one is a rule-pruning phase. In the first phase, the CBP learning algorithm enables the network to acquire the knowledge of fuzzy rules quickly and precisely. The main reason for these advantages is that the gradient descent search approach in the CBP algorithm enables the network to learn more precisely than typical competitive learning algorithms, while the dedicated structure of the network and the competitive characteristics of the CBP algorithm enable the network to converge much more rapidly than conventional backpropagation learning algorithms. The knowledge of fuzzy rules learned in the CBP training phase is distributed over the learnable weights of the network. Therefore, in the second phase of the learning procedure, a pruning process is performed to convert the distributed knowledge of fuzzy rules learned by the CBP training into itemizable rule bases.

In the near future, we plan to further study the learnability of the parameters of the membership functions and the choice of the competitive operations performed by the nodes in a fuzzy expert network.

References

[1] H.C. Fu and J.J. Shann, "Fuzzy Expert Networks," in Proc. of ICANN'93, Sep. 13-16, 1993, Amsterdam, Netherlands.

[2] S.I. Horikawa, T. Furuhashi, and Y. Uchikawa, "On Fuzzy Modeling Using Fuzzy Neural Networks with the Back-Propagation Algorithm," IEEE Trans. on Neural Networks, Vol. 3, No. 5, pp. 801-806, Sep., 1992.

[3] S.C. Kong and B. Kosko, "Adaptive Fuzzy Systems for Backing up a Truck-and-Trailer,"

IEEE Trans. on Neural Networks, Vol. 3, No. 2, pp. 211-223, March, 1992.

[4] B. Kosko, Neural Networks and Fuzzy Systems: A Dynamic Systems Approach to Machine

Intelligence , Prentice-Hall, Inc., New Jersey, 1992.

[7] C.T. Lin and C.S.G. Lee, "Neural-Network-Based Fuzzy Logic Control and Decision System," IEEE Trans. on Computers, Vol. C-40, No. 12, 1991.

[8] D. Nauck and R. Kruse, "A Fuzzy Neural Network Learning Fuzzy Control Rules and Membership Functions by Fuzzy Error Backpropagation," in Proc. of IEEE International Conference on Neural Networks (ICNN'93), pp. 1022-1027, 1993.

[9] J.J. Shann and H.C. Fu, "A Fuzzy Neural [...]," in Proc. of [...] World Congress, July [...].

[10] J.J. Shann and H.C. Fu, "Backpropagation Learning for Acquiring Fine Knowledge of Fuzzy Neural Networks," in Proc. of WCNN'93, Portland, Oregon, U.S.A., 1993.

[11] J.J. Shann and H.C. Fu, "[...] Fuzzy [...] for Acquiring [...]," in Proc. of International Symposium on Artificial Neural Networks (ISANN'93), [...] 1993, Hsinchu, Taiwan, R.O.C.

[12] L.A. Zadeh, "The Concept of a Linguistic Variable and Its Application to Approximate Reasoning, I, II, III," Information Sciences, Vol. 8, pp. 199-249, pp. 301-357; Vol. 9, pp. 43-80, 1975.

[13] L.A. Zadeh, "Fuzzy Sets," Information and Control, Vol. 8, pp. 338-353, 1965.