CAS 2000

-

IEE lnte atiqnal Sy o ium n ircuits andp y .

My28-31 Ipf%fjjpTv;$&

Perrormance AnaFysis an% WrcRi'Zecture va ua ion o

Codec System

Hao-Chieh Chang, Liang-Gee Chen, Mei-Yun Hsu and Yung-Chi Chang

DSP/IC

Design Lab, Department of Electrical Engineering

National Taiwan

University,Taipei, Taiwan, R.O.C.

Abstract

This paper presents various analyses of computational behavior, namely the number of datapath operations and memory access, on the core profile level 2 (CPL2) of MPEG-4 Video standard. These analyzed data exploit the load distribution and mode selection of the video system. The exploration of data-flow behavior and its derived computation of MPGE-4 video processing algorithms will then drive through an efficient architecture design.

I.

Introduction

In the recent years, visual communication becomes very popular and has attracted much attention of researchers as well as customers. Many advanced digital video standards, such as H.263 [

11:

MPEG- I[2]; MPEG-2 [3], make visual communication become more practical. Lately, rapid evolution of digital multimedia technology directs the multimedia communication service to provide more flexible and powerful functions. MPEG-4 [4] standard is undoubtedly the emerging standard for such multimedia communication trend. In comparison of MPEG- 1 and MPEG-2 standards, MPEG-4 standard video part (MPEG-4 Video) is not only to provide a high efficient video coding scheme for transmission but also to represent more active multimedia data with the capability of content-based interactive, universal access and error robustness.

In MPEG-4 Video standard, five different profiles are currently defined to support the coding of various video formats from QCIF to large size video (1920x1088) at a transmission rate ranging from several kbitsis up to 38.4 Mbits/s. Different algorithms and coding modes can be applied in different applications. This implies that high degree of flexibility and more computation power will be demanded for delivering MPEG-4 video. It could demand more processing capability than that today's processors can provide for real-time CPL2 applications or higher specification. A powerful computing engine with proper flexibility is thus required for those applications. Hardwire acceleration (coprocessor) provides a good solution to achieve better computing capability for video applications since data parallelism can be employed. In addition to applying the data parallelism technique, task-pipelining technique can be used by adopting the multithread architecture [ 5 ] .

This paper will discuss the computational behavior of MPEG-4 Video codec from the system point of view. Computational requirements and behavior of data transfer for a CPL2 MPEG-4 Video codec are analyzed first. Based on the analyzed results, design decisions for efficient codec architecture are derived in order to implement an efficient system architecture for MPEG-4 Video coding.

11.

MPEG-4 Video Codec.

MPEG-4 Video employs a tool-based coding scheme that can support various visual communication systems by utilizing different profiles and levels [6]. Such coding scheme allows MPEG-4 a better

adaptation to applications of different source data nature and any

possible channel environments. Besides, audioivisual contents from different sources can be coded with different scenarios (profiles): quality and dimensionality. This content-based concept allows scene

producers to create high flexible composed video scenes and allows users a high degree of interactivity with video contents. Each video object (VO) in a video scene can be divided into many time instances. called video object planes (VOPs). The individual VOP is allowed to have arbitrary shapes. As a consequence. the shape of an object has to be transmitted in addition to its texture. Figure 1 and 2 show the general video encoder and decoder structure for MPEG-4 separately. It is observed that the shape coding tool is provided in this structure beyond the conventional hybrid coding scheme.

I

I I

Figure 1 . General structure of MPEG-4 Video Encoder

F Shape information Shape Decodino

1

Motion M Y7

17;:7

Decoding Compensation Texlure VOP1

DBCOdlnQ Bullerpre 2 . General structure of MPEG-4 Video Decoder Due to the consideration of regularity, the implementation of MPEG-4 video Codec usually adopts macroblock (MB)-based coding scheme to support the coding of both rectangular video frames and arbitrarily shaped video objects. It is quite reasonable to employ the MB-based approach for conventional video frame coding. However, for the coding of arbitrarily shaped video objects, special processing is required to fit the MB-based approach. In the following, the essential processing for coding arbitrarily shaped video objects are briefly described.

A. Shape Coding

MPEG-4 video adopts the MB-based content-based arithmetic encoding (CAE: [7]) method and MB-based motion estimation to encode the binary shape information of video objects. For coding the shape of I-VOP; intra-mode CAE is applied for all the boundary blocks. While coding the shapes of P-VOP or B-VOP, however, before applying the inter-mode CAE, motion estimation for binary shape is performed to find the best matched macroblock such that inter-CAE could achieve higher compression performance.

B. Motion EstimationKompensation

&

Texture

Coding

0-7803-5482-6/99/$10.00 02000 IEEE

MPEG-4 video adopts a standard 8 x 8 or 16 x 16 pixels MB-based motion estimation and compensation algorithm to perform the temporal prediction coding for inter-frame video sequences. Moreover, several advanced prediction techniques, such as advanced prediction

(AP)

mode, overlapped block motion compensation (OBMC), unrestricted motion vector (UMV), can be optionally activated. Then, motion vectors and prediction errors are coded by VLC code. For arbitrarily shaped video objects, motion-repetitive padding is applied prior to ME for arbitrarily shaped objects. The texture information of intra-frames and prediction error of inter- frames are coded using the conventional 8 x 8 block-based DCT. In addition to the intra prediction of DC coefficients, advanced intra block prediction mode (DC/AC prediction) can be applied to remove the remaining redundancy between the DCT coefficients of neighbored blocks.111. Performance and Complexity Analysis of

MPEG-4 Video Codec.

MPEG-4 video codec involves complex data-intensive algorithms. Namely, a very large amount of data will be processed with some similar operations. For this: datapath computation capability and efficiency of data transfer will dominate the system performance of MPEG-4 video codec. In order to understand its computational behavior, we setup a computational model such that computation behavior of MPEG-4 Video codec can be modeled and analyzed. Besides, computer profiling is also employed as a reference for our behavior analysis. This run-time profiling has been performed on the MoMuSys Video FDIS Version 1.0 C implementation [SI. The analyzed results and discussions are describes as follows:

A. Behavior Model

In order to apply the model-based analysis on MPEG-4 video codec, a reference processor model has to be setup,

1-1

I

-+

T

Figure 3. Proposed basic compuatation model

As shown in Figure 3: basically it is a simple computation model composed of several function units, each which comprises a data processor (DP) and a memory module (local memory. LM and global memory, GM). The computational behavior can be modeled as memory access (data transfer between GM and LM) and data processing. Based on this model, we can divide the MPEG-4 video codec into several processing units, each that can deal with one major task of MPEG-4 video coding, such as DCT/IDCT: VLCNLD, MC, ME ... etc. After such a virtual machine has been setup, the computational behavior. i.e.: the number of data-path operations, memory access and memory-addressing operations can be calculated by directly analyzing the computing flow of algorithms.

Let us take the inverse quantization (IQ) tool for an example to show how to perform the proposed computational analysis. Figute 4 shows the IQ process adopted in MPEG-4. In the first step of IQ process, the Inverse Quantization Arithmetic. two different quantization methods can be used. Here the MPEG-like method is analyzed. The following

11-450

shows the formulas for the IQ procedure.

Mismatc Control Inverse Quantisation Saturatio Arithmetic quan-scale-code W[WI rvir~i

Figure 4. Procedure of inverse quantization defined in MPEG-4 standard

Inverse Quantization

(MPEG):

Saturation:

Mismatch control:

"=o .=o

F[vIu] = F' [ v ] [ u ] for all U , v except U = v = 7

I

F' U1171 if sum is oddF'[7][7]-1 if F'[7][7] isodd

F ' [ 7 1 [ 7 ] + 1 if F'[7][7]iseven

F[7X71

=I{

}

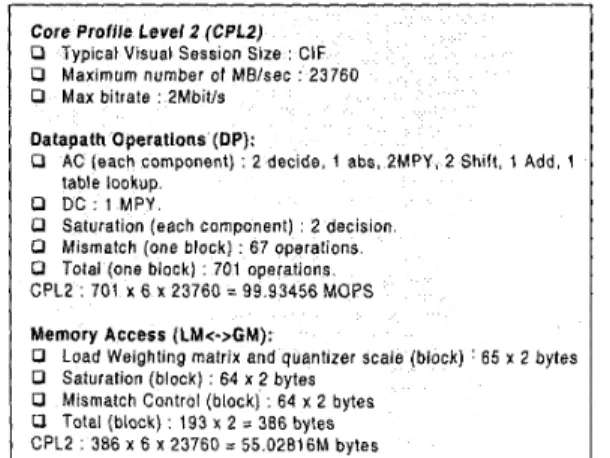

if sum is evenIn the inverse quantization step, each coefficient in an 8x8'block has to be checked if its value is equal to zero. If so: the reconstructed value is equal to zero, or further calculation has to be perfoimed. In the worst case analysis, the further calculation spends two data-path operations for decision, one for absolution: two for multiplication, two for shift, one for addition and one for table-lookup for each AC coefficient. Low frequency component (DC coefficient) is reconstructed by using one multiplication operation. In the second step, each pixel in the 8x8 block needs two more decision operations for saturation adjustment. Eventually, each block needs 67 extra operations for mismatch control in the final step. According to the above analysis, total data-path operations required by IQ process for CPLZ decoding are 99.93456 MOPS.

Then, we analyze the data transfer behavior while performing the method 1 of IQ process. A weighting quantization matrix (64 entries) and the quantizer scale, which are decoded by VLD processing unit, are loaded from GM. Assume the bit-width of these data is 16-bit: then totally it has to transfer 65 X 2 bytes to LM of IQ processing unit from GM. In Saturation, each component of the 8x8 block has to be

checked if it exceeds the legal range, so 64 x 2 bytes data are loaded from GM to LM (read) and 64

x

2 bytes data are stored back to GM (write). Such situation is the same with the Mismatch Control. In fact,the data leave in LM can be reused in these processing, which improves the performance of memory access. We will talk about this more in the section IV. Figure 5 summarizes the analyzed results of computational behavior of IQ process. Similarly, the other tools can be analyzed in the same way.

I I

Core Profile Level 2 (CPLZ) P Typical Visual Session Size CIF

P Maximum number of MBlsec 23760

P Max bitrate PMbitls Datapath Operations (DP):

0 AC (each component] 2 decide, 1 abs. 2MPY. 2 Shift, 1 Add, 1

0 DC 1 MPY

0 Saturation (each component) 2 decision

0 Mismatch (one block] 67 operations

0 Total (one block] 701 operations CPLZ

table lookup

701 X 6 X 23760 = 99 93456 MOPS

Memory Access (LM<->GM): 0 Saturation (block) : 64 x '2 bytes

0 Mismatch Control (block] : 64 x 2 bytes

0 Total (black) : 193 x 2 = 386 bytes

Figure 5 . Computational behavior of IQ method 1 based on proposed computation model

By employing this methodology, the computational cost can be easily estimated, including datapath operations and memory access. Besides, behavior of video coding algorithms becomes clear and easy to model. Thus: computational requirement of a real-time CPL2 MPEG-4 video codec could be determined based on this analysis. The results of computational analysis of MPEG-4 video codec are summarized in Table 1 and 2.

B. Run-time Profiling

The software run-time profiling of the non-optimized Momusys C implementation is performed on a general purpose RISC processor:

SUN UltraSparc2. GNU profiling tool, gprof. is employed to obtain the statistic of run-time information. In this non-optimized implementation, the sprial-search algorithm with SSDA [9] for motion estimation tool is adopted. It is simulated that the sprial-search with SSDA can achieve around 15%-3O% gain. However: this optimized search algorithm still dominates the computation of video coding. (can not real-time) It can be observed that this tool spends most of the execution time, about 85% or even higher. This implies that more efficient algorithms or architectures are required.

IV.

Implementation Consideration

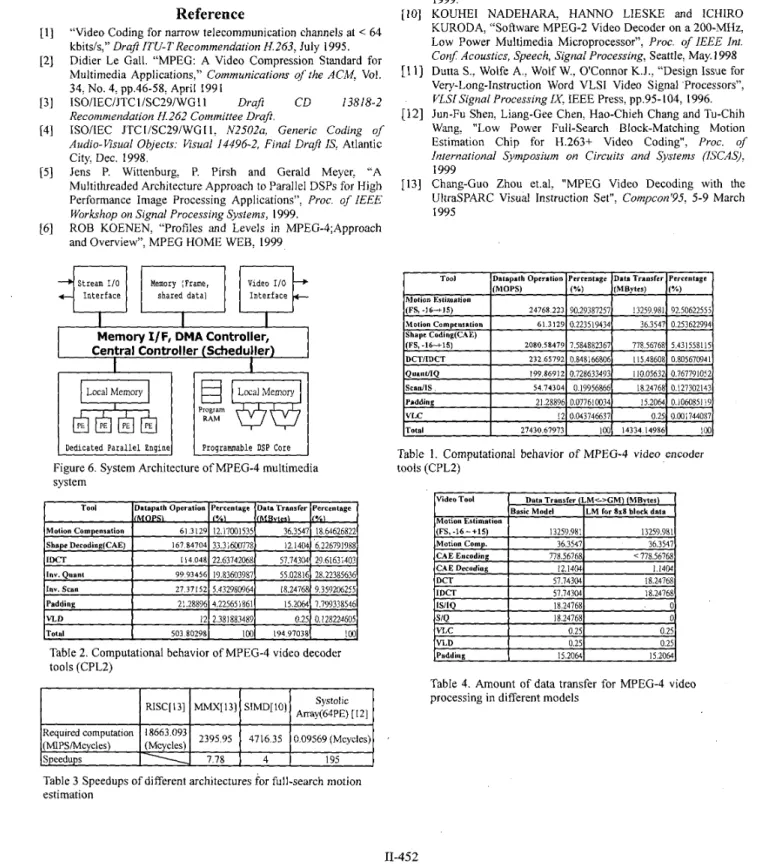

As mentioned before, programmability is strongly demanded for supporting various functionality as well as system parameters. Thus, software-oriented implementations should be more suitable for realizing a practical MPEG-4 system. Namely, employing programmable DSPs could be a feasible choice for real implementations. However, the computational requirements for MPEG-4 Video codec exceeds the capability that general-purpose programmable DSP processors can provide today. This strict requirement on computation leads to the development of very powerhl computing engines whose, performance is much improved by applying several advanced design concepts, such as SIMD [IO], VLIW [ 1 I], ... etc. In these design classics, parallel-computing techniques at various levels are employed in order to achieve higher computation performance. Besides: hardware acceleration (coprocessor) is another good choice for performance enhancement. In the near future, visual communication will become further functionality-rich. The need of high complexity algorithms for achieving those fantasy functionalities will increase. This requires the

development of powerful programmable architecture for supporting more flexibility. Accordingly, DSP design should be scalable for future use. On the other hand, however, several conventional video coding tools are more specified to some dedicated computation, such as Motion Estimation, DCTIIDCT.. .etc. Moreover, these coding tools are rather computation-intensive. Until now, the computational requirements of these coding tools still dominate the entire computation load. Namely, the system bottleneck would take place over here without optimal design considerations. Design efforts should be spent on these computation-intensive tasks to achieve the maximum optimization. By considering the computation behavior of these tasks, hardware acceleration could be the best solution for implementing these coding tools in terms of system performance. Taking both programmability and computational requirements into considerations, system architecture for MPEG-4 should be configured as two major computation units: a programmable DSP core and a dedicated engine to achieve its maximum performance. Figure 7 shows the proposed system architecture of a multimedia processor that targets MPEG-4 Video coding. The dedicated engine can be optimally designed by applying the design techniques, such as data- reuse and parallel computing, so as to achieve better performance. Table 3 shows different speedups for Motion Estimation tool by different architectures respectively. Since the full-search Motion Estimation algorithm has very good data locality, data-reuse can be applied very efficiently on the systolic array architecture [12]. Thus, they can achieve very much speedup. Generally speaking, the systolic array architecture can be treated as a SIMD processor. On the other hand, although several narrow-scene SIMD DSP processors [IO] have been reported that they can achieve much better performance on some video algorithms, such as MC, DCT/IDCT, the speedup is still not enough for real-time full-search Motion Estimation with large search range.

Memory configuration is another important issue for the system design. Therefore; the features of data storage and transfer for video processing tools in MPEG-4 core profile are analyzed so as to design efficient memory system. Table 4 shows the data transfer based on local memory storage analysis for the tools used in MPEG-4. A few tools require large amount of memory storage and data transfer including: Motion Estimation tool, DCTiIDCT tool, Motion Compensation tool and Padding tool. It's clear that if the sufficient local storage is provided, some data transfer overhead can be removed since several tools have data locality within an 8x8 block. Table 4 also shows that motion estimation tool dominates the cost of data transfer in MPEG-4 video processing. This leads to very much memory bandwidth and storage to be consumed. Hierarchical memory system can be employed to remove this problem. For the full-search motion estimation with macroblock size N and search range p: the minimum intermediate local buffer size to achieve minimum main memory access for previous frame data can be derived as the following equations.

Frame memory access: 3xH(width)xV(height)

Iniermediaie buffer: m a { (2p- l)x(2p+N- 1); (2N- I)x(2p+N- I)} This can much reduce the main memory access and thus reduce the heavy traffic of system bus.

Based on the various analyses to MPEG-4 video processing described before, we think that efficient system architecture for MPEG-4 video coding should be configured as follows. A powerful dedicated coprocessor for full-search motion estimation, a parallel DSP core for support flexible functions as well as on-chip memories as iocal data buffers to reduce access from large frame memory.

V.

Conclusion

In this paper, the computational behavior of MPEG-4 video codec is

analyzed by utilizing the proposed behavior model and runtime profiling. Based on the computational behavior analyses and data flow exploration, the design space for efficient architecture, including computing units and memory sub-systems is explored. A hybrid system architecture is considered to be the most efficient architecture for MPEG-4 Video coding since the maximum optimization is applied on it based on the complete exploration of computation behavior of the MPEG-4 Video coding.

Dedicated P a r a l l e l Engine

Reference

“Video Coding for narrow telecommunication channels at < 64 kbitsis,” Ora$ [TU-TRecommendation H.263: July 1995.

Didier Le Gall. “MPEG: A Video Compression Standard for Multimedia Applications,” Communications of the ACM. Vol.

34, No. 4: pp.46-58: April 1991

ISOIIECIJTCI/SC29/WG11 Draft CD 13818-2

Recommendation H.262 Committee Draft.

ISOiIEC JTC IiSC29iWG I I: N2502a, Generic Coding of

Audio-visual Objects: Visual 14496-2, Final Draji IS: Atlantic

City, Dec. 1998.

Jens P. Wittenburg, P. Pirsh and Gerald Meyer: “A Multithreaded Architecture Approach to Parallel DSPs for High Performance Image Processing Applications”, Proc. of 1EEE

Workshop on Signal Processing Systems, 1999.

ROB KOENEN, “Profiles and Levels in MPEG-4;Approach and Overview”: MPEG HOME WEB: 1999

Proqrauunable DSP Core

I I I

Video Tool

Memory I/F, DMA Controller, Central Controller (Scheduller)

I

I

Data Transfer (LM<->GM) (MBytes) Basic Model (LM for 8x8 block data

Local Memory

hs!,

(FS,-16-+15) Motion Comp. CAE Encoding CAE Decoding 13259.981 13259.981 36.3547 36.3547 778.56768 < 778.56768 12.1404 1.1404 I I I I I I DCTI

57.74304 18.24168N. Brady. F. Bossen and N. Murphy, “Context-based Arithmetic Encoding of 2D Shape Sequences”, Proc. of IEEE Confen?nce on Image Processing [lCIP), 1997

MPEG-4 VM software, European ACTS project MoMuSys, Apr. 1999.

Tatsuji M., Hiroshi S.: Takashi M.: and Ichiro K.: “Real-‘Time Software Video Codec with a Fast Adaptive Motion Vector Sesrch”, Proc. of IEEE Workshop on Signal Processing Systems,

1999.

KOUHEI NADEHARA, HANNO LIESKE and ICHIRO

KURODA, “Software MPEG-2 Video Decoder on a 200-MHz; Low Power Multimedia Microprocessor”, Proc. of IEEJ? Int. Conf Acoustics, Speech, Signal Processing, Seattle, May. 1998

Dutta S.; Wolfe A., Wolf W.: OConnor K.J., “Design Issue for Very-Long-Instruction Word VLSI Video Signal ‘Processors”:

VLSISignal Processing IX: IEEE Press, pp.95-104, 1996.

Jun-Fu Shen, Liang-Gee Chen, Hao-Chieh Chang and Tu-Chih Wang, “Low Power Full-Search Block-Matching hllotion Estimation Chip for H.263+ Video Coding”, Proc. of

International Symposium on Circuits and Systems [KCAS):

1999

Chang-Guo Zhou et.al, “MPEG Video Decoding with the UltraSPARC Visual Instruction Set”, Compcon’95, 5-9 March

1995

Required computation (MIPSiMcycles)

Table 1. Computational behavior of MPEG-4 video encoder tools (CPL2) 2395.95 4716.35 0.09569 (Mcycles) 18663.093 (Mcycles) Speedups 7.78 4 195 1 IDCT

I

57.743041 18.24768 IS/IQ 18.247681 0 lS/QI

18.247681 0 VLCI

0.25( 0.25 VLD 0.2sl 0.25 [PaddingI

15.20611 15.2064jTable 4. Amount of data transfer for MPEG-4 video processing in different models

Table 3 Speedups of different architectures for full-search motion estimation